Try this: go to your own company's careers page, find an open role, and apply for it. Use a personal email. Then wait.

What you'll probably get is a confirmation email that reads like it was written by a legal team in 2014 — "Thank you for your interest in opportunities at [COMPANY NAME]" — followed by absolutely nothing. No timeline. No indication of what happens next. No way to ask a question without hunting down a generic HR email address that may or may not be monitored. You just handed over your resume, your work history, and your salary expectations, and in return you got a void.

Now multiply that experience by every open role on your site, and you start to understand why your top candidates are accepting other offers before your team even opens their application. A recruiting chatbot doesn't fix your hiring process, but it fixes the part where your careers page feels like shouting into a well. This guide covers what chatbots actually do, where they help, where they quietly fall short, and how to tell whether yours is solving the right problem.

What is a recruiting chatbot?

A recruiting chatbot is a conversational tool that uses rules or AI to interact with candidates about open roles, application steps, screening questions, and scheduling. It lives on career sites, inside SMS flows, or across messaging channels, and its primary job is top-of-funnel coordination: answering routine questions, collecting basic information, and moving candidates to the next step. For ChatGPT's place in this landscape, see the guide on how ChatGPT fits into recruiting workflows.

Rule-based and AI-powered recruiting chatbots are not the same thing

A rule-based chatbot follows prewritten logic. Candidate says "What's the pay range?" and the bot returns the scripted answer. It works for fixed FAQs, basic knockouts, and routing candidates to the right page. If the question doesn't match a pattern, the bot stalls.
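To make the rule-based mechanism concrete, here is a minimal Python sketch of keyword matching. The patterns and scripted answers are hypothetical placeholders, not taken from any vendor's product. Note how "comp?" matches nothing and falls through to the fallback: that is the stall described above.

```python
import re

# Hypothetical FAQ rules: each regex maps to a scripted answer.
# Patterns and answers are illustrative placeholders only.
RULES = [
    (re.compile(r"\b(pay|salary|wage|compensation)\b", re.IGNORECASE),
     "Scripted answer: pay range for this role."),
    (re.compile(r"\b(shift|hours|schedule)\b", re.IGNORECASE),
     "Scripted answer: shift times for this role."),
    (re.compile(r"\b(location|where)\b", re.IGNORECASE),
     "Scripted answer: work location for this role."),
]

FALLBACK = "Sorry, I didn't understand that. Could you rephrase?"


def reply(message: str) -> str:
    """Return the first scripted answer whose pattern matches, else the fallback."""
    for pattern, answer in RULES:
        if pattern.search(message):
            return answer
    # No pattern matched: a rule-based bot has nothing else to offer.
    return FALLBACK


print(reply("What's the pay range?"))  # matches the pay rule
print(reply("comp?"))                  # no keyword match, so the bot stalls
```

An AI-powered bot replaces the regex list with an intent model, which is why it can map "comp?" to the pay answer.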

An AI-powered recruiting chatbot uses natural language processing to interpret intent rather than matching exact keywords. A candidate can type "how much does this job pay" or just "comp?" and get a useful answer. According to Mind the Bridge, regular chatbots give pre-programmed answers, while AI-powered chatbots interact more organically.

The buying implication: if your workflow is stable and repetitive, rule-based logic may be enough. If candidates ask open-ended questions or drop off because the bot can't understand them, AI-powered conversation can improve completion rates. But "more conversational" doesn't mean "better at judgment." It means better at understanding varied language.

What tasks a recruiting chatbot can actually handle

The most common recruiting chatbot tasks are:

- Answering candidate FAQs about pay, benefits, location, and application status

- Collecting basic qualification data like certifications, work authorization, and availability

- Suggesting matching open roles based on candidate inputs

- Scheduling interviews by syncing with recruiter calendars

- Sending reminder messages to reduce no-shows

- Nudging incomplete applicants to finish their applications

The mechanism is consistent: ask structured screening questions, capture responses, route the candidate to the next step. Some teams need this communication automation first. Others need a faster way to review candidates against clear criteria. For a broader look at the tool landscape, see the AI recruiting software comparison and pricing guide.

How a recruiting chatbot fits into the hiring process

A recruiting chatbot sits on top of your existing systems (career site, ATS, calendar, SMS flow) rather than replacing them. As TechTarget describes it, chatbots in recruitment take over the more mundane parts of the recruiting process. The value comes from removing repetitive coordination work, not from pretending chat is the entire hiring process.

The chatbot appears where the candidate already is: on the career site as a widget, inside a job ad flow, or in SMS follow-up. According to CleverConnect, most are integrated into a career site and trained on employer brand, job offers, and company organization. More advanced deployments use chat, SMS, social media, and QR codes. McDonald's Apply Thru showed multichannel recruiting at scale: candidates started by voice through Alexa and Google devices, then continued via text. For more on building the funnel that feeds these touchpoints, the guide on how to build a recruitment campaign walks through the full strategy.

Once the chatbot has a candidate's attention, the sequence is: ask knockout questions, capture availability, mark qualification status, offer interview times, sync the record to the recruiter's workflow. According to HireVue, 41% of U.S. employers plan to use text messages to schedule interviews. TechTarget documented how Hilton Hotels and Resorts used AllyO's recruiting chatbot for initial assessment of call center workers, then advanced qualified candidates to a video interview through HireVue. The chatbot handled the repeatable step. Humans handled everything requiring judgment.
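The sequence above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions: the knockout keys, the `Candidate` fields, the `screen` and `sync_to_ats` helpers, and the slot strings are all hypothetical, and `sync_to_ats` only builds the record that a real ATS integration would send.

```python
from dataclasses import dataclass, field

# Hypothetical knockout criteria for one hourly role.
KNOCKOUT_QUESTIONS = {
    "has_cdl": "Do you have a CDL?",
    "available_nights": "Are you available for nights?",
}


@dataclass
class Candidate:
    name: str
    answers: dict[str, bool]                                 # knockout answers
    availability: list[str] = field(default_factory=list)   # captured availability
    status: str = "new"
    offered_slots: list[str] = field(default_factory=list)


def screen(candidate: Candidate, open_slots: list[str]) -> Candidate:
    # Knockout step: a "no" or a missing answer fails the candidate.
    passed = all(candidate.answers.get(key, False) for key in KNOCKOUT_QUESTIONS)
    # Mark qualification status.
    candidate.status = "qualified" if passed else "not_qualified"
    if passed:
        # Offer only the recruiter slots that overlap the candidate's availability.
        candidate.offered_slots = [s for s in open_slots if s in candidate.availability]
        if not candidate.offered_slots:
            candidate.status = "needs_recruiter"  # no overlap: hand off to a human
    return candidate


def sync_to_ats(candidate: Candidate) -> dict:
    # Placeholder for the ATS write; returns the record instead of calling an API.
    return {"name": candidate.name, "status": candidate.status, "slots": candidate.offered_slots}


ana = Candidate("Ana", {"has_cdl": True, "available_nights": True},
                availability=["Tue 10:00", "Wed 14:00"])
print(sync_to_ats(screen(ana, ["Mon 09:00", "Wed 14:00"])))
```

The design choice worth copying is the last branch: when the rules can't resolve a case, such as a qualified candidate with no overlapping slot, the record is flagged for a recruiter rather than guessed at.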

Where recruiting chatbots create real value

The pitch on chatbots is speed, and to be fair, the pitch is correct. A candidate asking about shift times at 11:30pm on a Sunday gets an answer immediately instead of bouncing. Scheduling links go out in seconds instead of waiting for someone to check their inbox Monday morning. Basic qualification questions — "Do you have a CDL?" "Are you available for nights?" — get handled before a recruiter ever touches the application.

That speed compounds. When LinkedIn surveyed recruiters, 85% said AI tools save them meaningful time, and some companies using chatbots have reported cutting time-to-fill by more than half. Those numbers aren't fantasy, but they only materialize when the chatbot is solving the right problem, not just sitting on your careers page.

The less obvious win is candidate experience, and it's easy to get wrong. A chatbot improves experience when it reduces uncertainty: "Your application was received, here's what happens next, expect to hear from us by Thursday." It hurts experience when it adds friction — forcing candidates through a 15-question screening flow before they can even see the full job description, or cheerfully looping on "I didn't understand that, could you rephrase?" until someone closes the tab.

The test is simple: pull up your chatbot on a midrange phone with spotty reception while you're standing in line for coffee. If the flow is short, clear, and easy to bail out of, it'll work for candidates. If you find yourself squinting, waiting, or getting annoyed — that's your answer, and that's what your applicants are feeling too.

Where recruiting chatbots break down

The biggest failures happen when teams ask the bot to do two jobs at once: remove friction and exercise judgment. A bot handles "What shift is this?" without breaking a sweat. It struggles the moment the answer requires nuance or a read on something the candidate didn't explicitly say.

Chatbots perform poorly when candidate quality can't be reduced to a short list of must-haves. Conversational pre-qualification often becomes a brittle form that tries to infer potential from a handful of keywords. Candidates who don't speak in "ATS dialect" get routed away. People with career breaks, nontraditional backgrounds, or role changes confuse intent models trained on clean, linear career paths. As Quincy Valencia, VP of Product Innovation at Alexander Mann Solutions, has argued, the real evaluation standard is whether the technology helps candidates move faster with fewer hurdles and gives recruiters more time for high-value work.

Integration, compliance, accessibility, and maintenance are the hidden costs. Real recruiting stacks involve ATS mappings, calendar conflict logic, time zones, data retention rules, and accessibility requirements. If your team can't say where each candidate attribute originates, you end up with dueling sources of truth. Buyers clearly care about how chat data flows into the ATS; Greenhouse integration pages, for example, show up repeatedly in research on this topic. The chatbot is also a content and operations product, not a one-time install: someone has to maintain FAQs, update compensation language, and revise escalation rules.
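One way to answer the "where does each attribute originate" question is to keep a data dictionary and check it for attributes that more than one system writes. The attribute names and system labels below are hypothetical; the point is the check, not the schema.

```python
# Hypothetical data dictionary: each candidate attribute lists the systems that write it.
DATA_DICTIONARY: dict[str, list[str]] = {
    "email": ["chatbot"],
    "work_authorization": ["chatbot"],
    "availability": ["chatbot", "calendar"],
    "application_status": ["ats", "chatbot"],
}


def dueling_sources(dictionary: dict[str, list[str]]) -> list[str]:
    """Return attributes written by more than one system (no single source of truth)."""
    return sorted(attr for attr, systems in dictionary.items() if len(systems) > 1)


print(dueling_sources(DATA_DICTIONARY))
```

Each attribute this flags needs a decision about which system wins before launch, not after the first conflicting record.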

Recruiting chatbot vs. candidate screening software

This is the decision most chatbot content skips. The choice isn't "automation or no automation." It's which part of the funnel needs structure first.

Choose a chatbot when your bottleneck is communication and scheduling

A chatbot is the better first investment when candidates keep asking the same questions, recruiters are buried in scheduling, and applicants drop because no one responds fast enough. The best-fit conditions: hourly or high-volume roles with stable knockout criteria, clear shift or location constraints, and repeatable first-step flows. Even then, the chatbot should route candidates to a human or a structured screening step once nuance begins.
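A handoff rule is easier to enforce when it is written down as explicit triggers. The sketch below assumes three: the candidate asks for a person, the message touches a topic flagged as needing judgment, or the bot has failed to understand several times in a row. The phrases and the `MAX_FALLBACKS` threshold are illustrative assumptions to tune against real transcripts.

```python
# Hypothetical handoff policy; thresholds and phrases are assumptions, not vendor defaults.
MAX_FALLBACKS = 2
HUMAN_REQUEST_PHRASES = ("talk to a person", "speak to someone", "recruiter", "human")
JUDGMENT_TOPICS = ("career break", "gap in my resume", "accommodation", "changing careers")


def should_hand_off(message: str, consecutive_fallbacks: int) -> bool:
    text = message.lower()
    if any(phrase in text for phrase in HUMAN_REQUEST_PHRASES):
        return True  # candidate explicitly asked for a person
    if any(topic in text for topic in JUDGMENT_TOPICS):
        return True  # nuance the bot shouldn't try to judge
    # Break the "could you rephrase?" loop after repeated misunderstandings.
    return consecutive_fallbacks >= MAX_FALLBACKS


print(should_hand_off("What shift is this?", 0))           # routine question, bot keeps it
print(should_hand_off("I had a career break in 2021", 0))  # routed to a human
```

Simple substring checks like these will miss paraphrases, which is another reason to review transcripts during the pilot.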

Choose structured screening when your bottleneck is reviewing too many applicants

If the real problem is "we have 80 applicants and no fast, consistent way to compare them," a chatbot won't solve it. Conversation is not the same as structured evaluation.

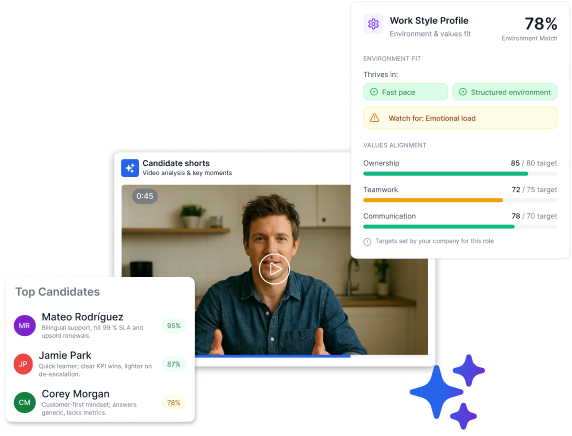

Truffle is candidate screening software that combines resume screening, one-way video interviews, and talent assessments. Instead of chatting every candidate through the funnel, you design a screening process, then use AI to surface insights against your criteria. Truffle analyzes every response against employer-defined criteria, generates match percentages and summaries, and produces ranked shortlists so the team can review faster while humans make every hiring decision. Teams can add teammates so hiring managers review alongside recruiters. Multi-select lets you export filtered shortlists and move candidates in bulk.

How to evaluate and pilot a recruiting chatbot without hurting candidate trust

Ask vendors these six questions before you buy

- How quickly can a recruiter take over a live conversation? Slow handoffs lose edge-case candidates.

- What percentage of your workflows are rule-based versus model-driven? This tells you whether the bot handles flexible language or only preset scripts.

- How do you handle accessibility and assistive technologies? Screen readers and keyboard navigation matter.

- What does ongoing content maintenance look like each month? FAQ answers go stale. Compensation ranges change.

- Can you show a real ATS event map and data dictionary? "We integrate with everything" isn't an answer. (If you're also evaluating your CRM stack, the Recruit CRM review and alternatives for small teams covers how those choices affect automation feasibility.)

- How are candidate records updated, retained, reviewed, and deleted? Data lifecycle matters for compliance.

You're not buying a demo. You're buying the Tuesday after launch.

Run a 30-day pilot on one high-volume role and measure four outcomes

- Week 1: Choose one high-volume role. Define must-have criteria. Set the human handoff rule.

- Week 2: Launch for a narrow workflow: FAQs plus scheduling, or FAQs plus basic pre-qualification.

- Week 3: Review transcripts. Read actual conversations. Fifty failed conversations teach more than a perfect dashboard.

- Week 4: Compare results against your baseline. Expand, revise, or stop.

Measure four things: time to first response, screening completion rate, recruiter time saved, and candidate satisfaction signals. According to SHRM (cited by HeroHunt.ai), teams using recruiting automation filled 64% more jobs and submitted 33% more candidates per recruiter. Two questions decide the outcome: Did candidate experience get better or worse? Did the hiring team trust the pipeline more or less?
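Three of the four pilot measures are simple arithmetic once you export timestamps and counts; candidate satisfaction signals usually come from surveys or transcript review instead. The helper names below are hypothetical, and minutes per task for recruiter time saved is an estimate you supply, not something the chatbot measures.

```python
from datetime import datetime
from statistics import median


def median_first_response_minutes(pairs: list[tuple[datetime, datetime]]) -> float:
    """pairs = (candidate's first message, first reply) timestamps."""
    return median([(replied - sent).total_seconds() / 60 for sent, replied in pairs])


def completion_rate(started: int, completed: int) -> float:
    """Share of candidates who started screening and finished it."""
    return completed / started if started else 0.0


def recruiter_hours_saved(tasks_automated: int, minutes_per_task: float) -> float:
    """Estimated hours saved, given your own per-task time estimate."""
    return tasks_automated * minutes_per_task / 60


def percent_change(baseline: float, pilot: float) -> float:
    """Relative change from the pre-pilot baseline, in percent."""
    return (pilot - baseline) / baseline * 100


print(completion_rate(200, 150))     # 0.75
print(percent_change(0.5, 0.75))     # 50.0
```

Run the same functions on your pre-pilot baseline so the Week 4 comparison uses identical definitions on both sides.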

If you need more than a recruiting chatbot, fix screening first

Use a chatbot when your biggest problem is repetitive communication and scheduling. Use candidate screening tools when the problem is reviewing too many applicants consistently. Start your free Truffle trial, no credit card required, and review candidates with more structure and more consistency.

Frequently asked questions

What is a recruiting chatbot?

A recruiting chatbot is a conversational tool that automates early-stage candidate interactions: answering FAQs, collecting qualification data, scheduling interviews, and sending reminders. It can be rule-based or AI-powered. It coordinates the top of the funnel. It does not replace recruiter judgment.

What is the best recruiting chatbot?

It depends on your volume and workflow. Paradox AI (Olivia) is the most recognized name, especially for high-volume hourly hiring. Sense covers a broad feature set across SMS, WhatsApp, and web chat. But "best" only matters after you've diagnosed whether a chatbot is the right tool at all.

Do recruiting chatbots replace recruiters?

No. A recruiting chatbot handles repetitive communication: answering the same questions, collecting the same data, sending the same reminders. The recruiter's job, exercising judgment about fit and navigating ambiguity, requires a human.

What is the difference between a recruiting chatbot, conversational AI, voice AI, and AI agents?

| Category | What it does | Best for |
|---|---|---|
| Recruiting chatbot | Text-based conversation for FAQs, screening, scheduling | High-volume candidate communication |
| Conversational AI | Broader category using NLP/NLU for human-like dialogue | Complex multi-turn interactions |
| Voice AI | Phone or voice-based candidate conversations | Phone screens, outbound calling at scale |
| AI agents | Semi-autonomous systems that take actions across tools | Multi-step workflow orchestration |

How is a recruiting chatbot different from an HR chatbot?

A recruiting chatbot focuses on candidate-facing interactions during hiring: application questions, screening, scheduling. An HR chatbot handles employee-facing tasks like benefits enrollment, policy questions, and time-off requests. The workflows, users, and data requirements are different.