You added a hiring assessment to your screening process. Completion rates dropped 40%. Your strongest candidates ghosted. Your hiring manager asked why you're making people take a personality quiz for a warehouse role.

The problem wasn't assessments. It was the wrong assessment for the wrong role at the wrong time.

Hiring assessments work when they're matched to the signal you're actually missing. A cognitive test tells you something different than a situational judgment exercise. A personality profile answers different questions than a skills test. And a 45-minute assessment battery before a candidate has even spoken to a human is a different experience than a 10-minute check after an initial screen. Most teams treat assessments as interchangeable. They're not. The type, the timing, and the length all have to match the role and the gap in your evidence.

What hiring assessments actually measure (and what they don't)

The phrase "hiring assessments" covers at least five distinct types of evaluation. Each one captures a different signal. Treating them as interchangeable is where most screening processes break.

Cognitive ability tests

These measure reasoning, problem-solving, and learning speed. They're among the most researched types of hiring assessment, with decades of published data behind them. They're useful for roles where someone needs to process new information quickly. They're less useful for roles where the work is routine and well-documented.

The limitation: cognitive tests tell you how someone thinks in the abstract. They don't tell you how they'll handle a specific situation on your team.

Personality assessments

Personality assessments based on validated research (like the Big Five/IPIP framework) measure traits like conscientiousness, agreeableness, and openness. They surface tendencies, not types. A candidate isn't "an introvert" or "an extrovert." They fall somewhere on a spectrum.

The signal here is alignment, not judgment. You're looking at whether someone's working style fits how your team operates. Results are conversation starters for interviews, not pass/fail gates. If you're using a pre-employment assessment tool that labels candidates as personality "types," that's a red flag about the tool, not the candidate.

Situational judgment tests

SJTs present candidates with realistic scenarios and ask how they'd respond. The employer defines what "good" looks like. There's no universal right answer. That's the point.

This makes SJTs harder to game than most other employment assessments. Every employer's preferred approach is different, so there's no cheat sheet. They're especially useful for roles where judgment matters more than technical knowledge: customer-facing positions, management roles, anything where "it depends" is the honest answer to most questions.
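To make the "no universal right answer" point concrete, here is a minimal sketch of scoring an SJT against an employer-defined key. The scenario, option labels, and point values are all invented for illustration, and this is one simple way to do it, not how any particular tool scores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One SJT item: a prompt plus the points this employer gives each option."""
    prompt: str
    option_points: dict[str, int]  # option label -> points, set by the employer


def score_sjt(scenarios: list[Scenario], answers: list[str]) -> float:
    """Return the share of available points earned, from 0.0 to 1.0."""
    if len(scenarios) != len(answers):
        raise ValueError("expected one answer per scenario")
    # An answer that is not in the employer's key earns no points.
    earned = sum(s.option_points.get(a, 0) for s, a in zip(scenarios, answers))
    available = sum(max(s.option_points.values()) for s in scenarios)
    return earned / available if available else 0.0


# Two hypothetical employers key the same scenario differently.
prompt = "A customer asks for a refund outside the return window."
retailer_a = [Scenario(prompt, {"escalate": 2, "refund": 1, "refuse": 0})]
retailer_b = [Scenario(prompt, {"escalate": 0, "refund": 2, "refuse": 1})]

print(score_sjt(retailer_a, ["refund"]))  # 0.5
print(score_sjt(retailer_b, ["refund"]))  # 1.0
```

The same answer earns half the points from one employer and full points from the other, which is why a generic cheat sheet doesn't help.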

Skills and technical tests

Coding challenges, writing samples, data analysis exercises. These measure whether someone can do the specific work the role requires. They're the most face-valid assessment type. Candidates understand why they're being tested, which helps with completion rates.

The risk: technical tests are increasingly easy to cheat on with AI. A candidate can paste your coding challenge into ChatGPT and return a passing solution in minutes. This doesn't mean skills tests are dead. It means you need to pair them with other signals that are harder to fake, or design tests that require real-time problem-solving.

Environment fit assessments

These surface whether a candidate's preferences match the reality of the role. Work schedule, pace, management style, team structure. The goal isn't to judge preferences. It's to set accurate expectations on both sides.

Research on realistic job previews suggests that when candidates know what they're walking into, early turnover drops. That's a different claim than "this assessment will predict who succeeds." It's about reducing surprises, not predicting outcomes.

How to match hiring assessments to the role

Picking the right assessment starts with a simple question: what signal are you missing?

If your screening process already includes resume review and interviews, you probably have a read on credentials and communication. What you might be missing is how someone thinks under pressure, whether their work style fits your environment, or whether they can actually do the technical work.

High-volume, low-resume-signal roles

Warehouse associates. Customer service reps. Retail staff. These roles get hundreds of candidates per posting, and resumes tell you almost nothing about who will actually show up and perform.

For high-volume hiring, keep assessments short (under 15 minutes) and focused on the signal that matters most. An environment fit check that surfaces whether someone actually wants to work nights is more useful than a cognitive battery. An SJT with three customer scenarios tells you more than a personality quiz.

Specialized or technical roles

Software engineers. Data analysts. Financial modelers. Here, the resume gives you a credential signal, but you need proof of capability.

A skills-first hiring approach works well for these roles, but the assessment has to match the actual work. A generic coding challenge tests algorithm knowledge, not whether someone can debug your codebase. Design the test around the real work, keep it under 30 minutes, and tell candidates exactly what you're measuring.

Management and leadership positions

Judgment matters more than technical skill. SJTs and personality assessments are more useful here than skills tests. You want to know how someone handles ambiguity, gives feedback, and prioritizes under pressure.

Pair assessments with one-way interview questions that ask candidates to walk through real decisions. The combination of how they think (assessment) and how they communicate (interview) gives you a much richer picture than either one alone.

The hiring assessment mistakes that make candidates disappear

Assessments don't just fail when they measure the wrong thing. They fail when the candidate experience is bad enough that good people walk away.

Too long, too early

One recruiter on Reddit put it this way: "We're asking them to take a 45-minute long test before they can get an interview or meet with a person or get the job offer. So I think there's a trust/commitment issue."

That's exactly right. Pre-hire assessments work best after a candidate has some investment in the process. A 10-minute check after an initial screen feels reasonable. A 45-minute battery before they've talked to anyone feels like homework from a stranger.

No context or explanation

Candidates who understand why they're being assessed complete assessments at higher rates. "We use this to make sure your work style fits our environment" is a reason. "Please complete this assessment to proceed" is a command.

Tell candidates what you're measuring, how long it takes, and how you'll use the results. Transparency isn't just good candidate experience (and you should be surveying that experience). It also improves the quality of responses because candidates stop trying to game something when they understand there's no trick.

Irrelevant content

A personality assessment for a role where personality barely matters. A cognitive test for a position where the work is procedural. When the assessment doesn't connect to the role, candidates notice. And they leave.

The fix: map every assessment to a specific signal you can't get from the resume or interview. If you can't name the signal, you don't need the assessment.
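If it helps to see that rule written down, here is a rough sketch of the check. The assessment names, the signal labels, and the set of signals already covered are all made up for the example; swap in your own.

```python
# Signals the resume review and interview already cover (assumed for illustration).
ALREADY_COVERED = {"credentials", "communication"}

# Hypothetical plan: each assessment is paired with the signal it should supply.
planned = {
    "environment_fit": "schedule and pace preferences",
    "sjt": "judgment in customer scenarios",
    "personality": "",                  # nobody could name the signal
    "writing_sample": "communication",  # the interview already covers this
}


def keep_justified(plan: dict[str, str], covered: set[str]) -> dict[str, str]:
    """Keep only assessments with a named signal that nothing else provides."""
    return {
        name: signal
        for name, signal in plan.items()
        if signal.strip() and signal not in covered
    }


print(keep_justified(planned, ALREADY_COVERED))
# {'environment_fit': 'schedule and pace preferences', 'sjt': 'judgment in customer scenarios'}
```

Anything that drops out of the plan is an assessment you can't justify to a candidate, which is a good sign you don't need it.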

How to use hiring assessments without adding friction

The best assessment strategy isn't about finding the most sophisticated test. It's about getting to your shortlist faster with evidence you trust.

Combine assessments with other screening signals

Assessments in isolation are limited. A personality profile without context is just a data point. But assessment data sitting next to resume qualifications and interview responses creates a candidate view that's genuinely useful.

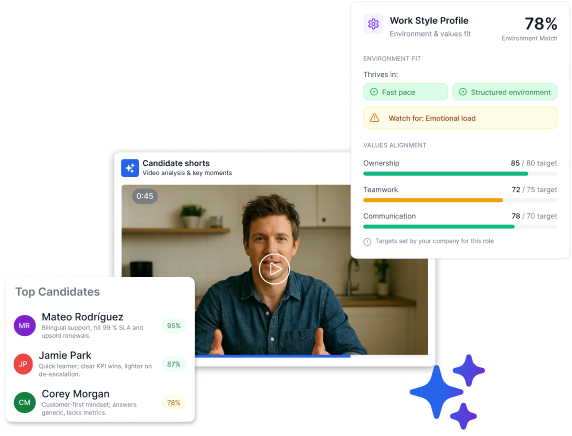

This is where Truffle's approach fits. Truffle is a candidate screening platform that combines talent assessments, one-way video interviews, and resume screening. Use any on its own or combine all three. You can layer in a Personality assessment (based on validated Big Five research), a Situational Judgment Test that scores against your team's preferred approach, or an Environment Fit assessment that surfaces whether someone's preferences match the reality of the role.

The results sit next to interview responses and resume data in one candidate profile. They're diagnostic, not dispositive. Gaps between candidate preferences and role reality become talking points for your live interviews, not automatic disqualifiers.

Keep it short

Under 15 minutes for high-volume roles. Under 30 for specialized positions. If your assessments take longer than that, you're testing patience, not capability.
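A quick way to hold yourself to those limits is to add up the planned minutes before you send anything. The sketch below assumes two role categories and some example durations; the category names and the numbers in the plans are placeholders, not measurements.

```python
# Limits in minutes from the guidance above; the category labels are assumptions.
MAX_MINUTES = {"high_volume": 15, "specialized": 30}


def fits_time_limit(role_type: str, assessment_minutes: dict[str, int]) -> bool:
    """Return True if the combined assessments stay under the role's limit."""
    total = sum(assessment_minutes.values())
    # "Under 15" is read strictly: the total must be below the limit.
    return total < MAX_MINUTES[role_type]


warehouse_plan = {"environment_fit": 5, "sjt": 8}      # 13 minutes in total
overloaded_plan = {"cognitive": 20, "personality": 25}  # 45 minutes in total

print(fits_time_limit("high_volume", warehouse_plan))   # True
print(fits_time_limit("high_volume", overloaded_plan))  # False
```

The check counts the total across every assessment in the stage, since that is the time the candidate actually experiences.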

Make timing intentional

The recruitment funnel has stages for a reason. Assessments fit best after initial qualification (resume screen or brief intro) but before the investment of a live interview. This way, candidates have enough context to take the assessment seriously, and you get the signal before you spend 30-60 minutes of interview time.

Let AI handle the analysis, not the decisions

AI can score assessment responses against your criteria consistently across hundreds of candidates. It can surface patterns and rank alignment. What it can't do is decide who to hire. That's your call.

Truffle's AI analyzes assessment results and surfaces match scores that show how closely each candidate aligns with your criteria. You see exactly why someone scored the way they did. The scoring is transparent and explainable. AI handles transcription, scoring, and ranking. You handle the conversations and judgment calls.

This matters because the talent acquisition AI space is full of tools that promise to "identify top candidates" or "predict performance." Those are overclaims. What you actually want is consistent analysis that helps you prioritize your review. AI surfaces. You decide.

Design your own process

Not every role needs every type of assessment. A customer service position might need an SJT and an environment fit check. An engineering role might need a technical test and a personality profile. A management position might need an SJT and a one-way video interview.

With Truffle, you design the screening workflow that fits the role. Resumes and assessments. Interviews and assessments. All three. The platform doesn't force a sequence. You build what makes sense at $149/month ($99/month with annual billing), and every plan includes talent assessments, AI analysis, and Candidate Shorts.

FAQ

What are the most common types of hiring assessments?

The five main types are cognitive ability tests (reasoning and problem-solving), personality assessments (working style and traits), situational judgment tests (how candidates handle realistic scenarios), skills tests (job-specific technical ability), and environment fit assessments (preference alignment with role realities). Each captures a different signal. The right choice depends on the role and what information your candidate screening process is missing.

How long should a hiring assessment take?

Under 15 minutes for high-volume roles. Under 30 minutes for specialized positions. Anything longer risks candidate drop-off without proportionally better signal. If you need more data, it's better to split assessments across stages than to front-load everything.

Do hiring assessments actually improve quality of hire?

Assessments improve hiring decisions when they're matched to the role and used as one input alongside other evidence. They don't predict success on their own. They surface alignment between what a candidate brings and what the role requires. Used well, they reduce surprises. Used poorly (wrong type, wrong stage, too long), they just reduce your candidate pool.

Can candidates cheat on hiring assessments with AI?

Skills tests and cognitive assessments are increasingly vulnerable to AI-assisted responses. Personality and environment fit assessments are harder to game because there's no universal right answer. SJTs where the employer defines the preferred approach are also resistant to gaming. The best defense is combining multiple types of candidate screening methods so no single signal carries all the weight.

When in the hiring process should you use assessments?

After initial qualification but before live interviews. The candidate should have enough context to take the assessment seriously (they've seen the role, maybe had a brief screen), and you should get the data before investing 30-60 minutes of interview time. Sending a 45-minute assessment before any human contact is the fastest way to lose good candidates.

The bigger picture

The conversation about hiring assessments is shifting. It used to be about finding the most rigorous test. Now it's about finding the right combination of signals for each role, delivered in a way that respects the candidate's time.

The companies getting this right aren't adding more tests. They're building screening workflows where resume data, interview responses, and assessment results sit in one view. Where each piece of evidence answers a question the others can't. Where the goal isn't to test more, but to learn what you need to know before the first live conversation.

That's not a technology problem. It's a design problem. And the teams that solve it first will spend less time screening and more time talking to people who are actually worth talking to.

Try Truffle instead.