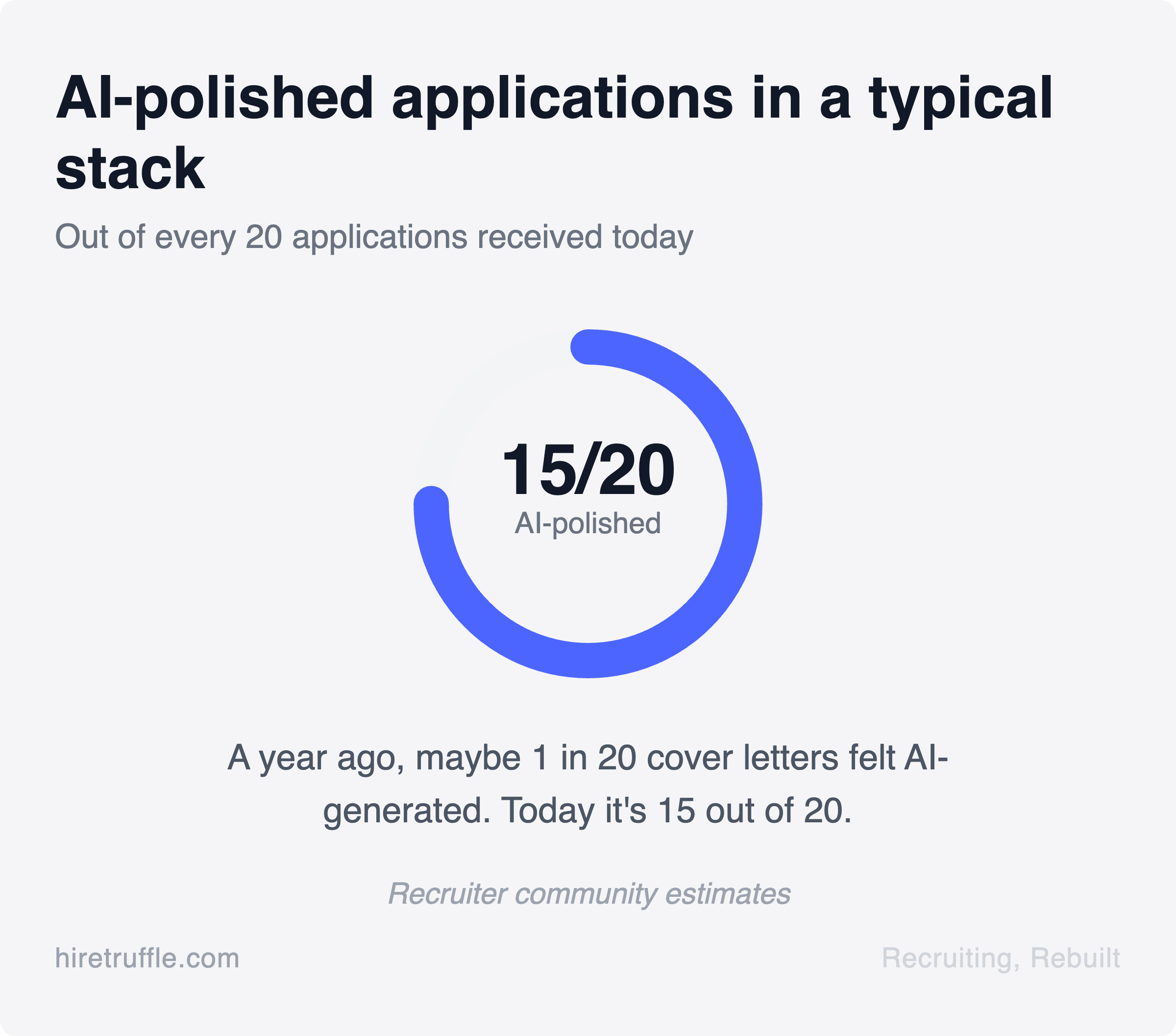

Every application in your inbox sounds the same. Perfect grammar, buzzword-rich, tailored to your exact position description with eerie precision. A year ago, maybe 1 out of 20 cover letters had that glossy, AI-polished feel. Now it’s 15 out of 20.

You’re reading the same "passionate and results-driven professional" pitch on repeat. You can’t tell who actually wrote it. This is the new reality of candidates using ChatGPT to apply for positions, and if your current strategy is "read more carefully," you’re already losing.

You can’t detect AI-written applications reliably. But you can design a screening process where it doesn’t matter. Stop playing detective. Start building a process where AI-written text doesn’t give anyone an unfair advantage.

The AI application flood is real (and it’s accelerating)

ChatGPT launched in late 2022. Within months, candidates figured out they could paste a position description into a chat window and get a polished cover letter in 30 seconds. Then came the resume rewriters, the AI interview coaches, and the browser extensions that auto-fill applications across job boards like Indeed.

The numbers are hard to pin down because no one has definitive industry data yet. But the signals are impossible to ignore. Reddit’s recruiting communities have seen a 92% year-over-year increase in posts about AI-generated applications.

Recruiters describe opening their ATS to find dozens of applications with identical phrasing. One recruiting leader put it this way: receiving over a thousand candidates, fake or not, creates a volume problem that’s nearly impossible to manage productively.

Imagine you’re an HR manager at a 120-person company. You post a customer success role on LinkedIn and a major job board. Within a week, you have 200 applications.

At least half of them read like they were written by the same person. The cover letters hit every keyword. The resumes are polished. And you have no idea which candidates actually have the skills you need.

This isn’t a future problem. It’s your Tuesday.

Why AI detection tools won’t save you

The instinct makes sense: if AI created the problem, AI should detect it. But AI text detection doesn’t work for hiring. Here’s why.

Detection tools work by measuring statistical patterns in writing. They look for things like perplexity (how predictable the next word is) and burstiness (how much sentence length varies).
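To make "burstiness" concrete, here is a minimal sketch that treats it as the spread of sentence lengths in a piece of text. This is a toy proxy, not how any particular detector works: real tools typically score perplexity with a language model, and the function names and sample sentences below are made up for illustration.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Population standard deviation of sentence lengths (0.0 for fewer than two sentences)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths)


# Hypothetical samples: one with evenly sized sentences, one with uneven ones.
uniform = (
    "I drive results every single day. "
    "I lead teams with great care. "
    "I ship value to all users."
)
varied = (
    "I broke it. "
    "Then I spent two long weeks tracing the billing bug through three services. "
    "Fixed."
)

print(sentence_lengths(uniform), burstiness(uniform))
print(sentence_lengths(varied), burstiness(varied))
```

The evenly paced sample scores lower than the uneven one. A careful human writer can produce evenly paced prose too, which is one reason a signal like this is easy to misread.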

The problem is that these tools were built mainly with academic writing in mind, not job applications. They produce false positives on candidates who simply write well and false negatives on candidates who’ve learned to edit their AI output.

A candidate who pastes raw ChatGPT output into a cover letter might get flagged. But a candidate who uses ChatGPT as a starting point, then edits and adds personal details? That will usually slip past a detector.

The arms race is already over. The detectors lost.

There’s also a legal gray area. Using AI detection to screen out candidates raises questions about disparate impact. Non-native English speakers, neurodivergent candidates, and people with certain disabilities may use AI writing tools as legitimate accommodations. Rejecting candidates based on an AI detector’s guess about authorship puts you on shaky legal ground.

And practically, even a 90% accurate detector fails you at scale. If you have 200 applications and the tool is wrong 10% of the time, it misjudges about 20 candidates, wrongly flagging some and wrongly clearing others. Some of the people flagged in error might be your strongest matches.
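A quick back-of-the-envelope sketch of that arithmetic, under two loud assumptions: the detector is equally accurate on AI-written and human-written text, and you somehow know what share of applications are AI-written. Neither holds cleanly in practice, and the function name and the 50% share are hypothetical.

```python
def expected_errors(total: int, ai_share: float, accuracy: float) -> dict[str, float]:
    """Expected detector mistakes, assuming the same accuracy on both kinds of text."""
    ai_written = total * ai_share
    human_written = total - ai_written
    error_rate = 1 - accuracy

    false_positives = human_written * error_rate  # real writers wrongly flagged
    false_negatives = ai_written * error_rate     # AI-written text wrongly cleared
    return {
        "false_positives": round(false_positives, 1),
        "false_negatives": round(false_negatives, 1),
        "total_errors": round(false_positives + false_negatives, 1),
    }


# 200 applications, a 90% accurate detector, and an assumed 50% AI-written share.
print(expected_errors(total=200, ai_share=0.5, accuracy=0.9))
```

Under the equal-accuracy assumption the total stays at 20 mistakes whatever share you plug in. What changes is the split between people wrongly flagged and AI-written applications wrongly cleared.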

The bottom line: Detection is a losing game. You need a different approach.

What AI-generated applications actually look like

Before moving past detection, it’s worth understanding what you’re seeing. Not to catch anyone, but to understand why your text-based screening signals are breaking down.

AI-generated applications share a few patterns. The language is consistently polished but generic. You’ll see phrases like "proven track record of driving results" and "passionate about collaborating cross-functionally" repeated across dozens of applications. The writing is grammatically perfect but says almost nothing specific.

Cover letters hit every keyword in your position description. They mirror your language back to you without adding new information. If your listing says "experience with Salesforce," the cover letter will mention Salesforce. But it won’t tell you what they actually built or how they used it.

Resumes show a similar pattern. Bullet points that sound impressive but are vaguely structured. Quantified achievements that feel plausible but unverifiable. "Increased revenue by 30% through strategic initiatives."

Which initiatives? What was the starting point? These details are missing because ChatGPT doesn’t have them.

Here’s the bigger problem: these job applications look good. As one recruiter noted, fake resumes tend to look pretty high quality. They pass keyword filters. They pass quick scans.

The wasted time only shows up once you’re deep enough into the process to realize there’s no substance behind the polish.

That’s the signal loss. The tools you’ve relied on for years (cover letters, resume bullet points, keyword matching) were already imperfect indicators of actual capability. AI just compressed the time it takes to game them from hours to seconds.

Screening methods that AI can’t fake

Here’s where the conversation shifts from problem to solution.

If AI-generated text is unreliable as a screening signal, you need screening methods that produce signals AI can’t replicate. The good news is they exist. The common thread: they require candidates to demonstrate thinking, not just describe it.

Video responses show what text hides. When a candidate records a video answer to a screening question, you see how they think on their feet. You hear their communication style. You notice whether they understand the problem or are reciting a rehearsed script.

ChatGPT can write a perfect answer to "Tell us about a time you handled a difficult customer." It can’t deliver that answer on camera with authentic examples and natural body language.

One-way video interviews are the most direct counter to AI-generated text. You set the questions. Candidates record their answers on their own time. You review the responses and focus on the people who demonstrated real understanding, not just polished phrasing.

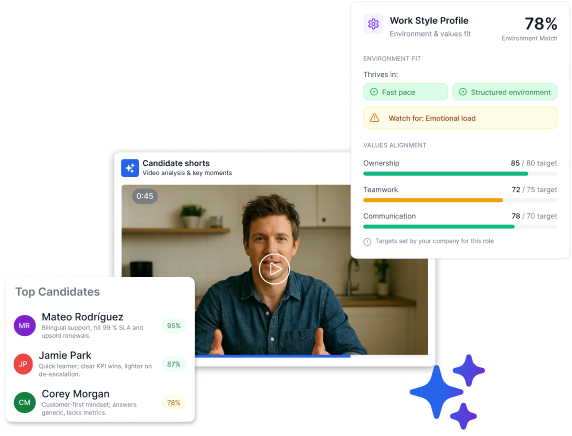

Truffle is a candidate screening platform that combines resume screening, one-way video interviews, and talent assessments. Instead of watching hours of video, Truffle’s AI analyzes each response against the criteria you’ve defined. It surfaces match scores showing how closely each candidate aligns with your requirements.

Candidate Shorts surface the most revealing moments from each interview in about 30 seconds. You can quickly see communication style, thinking process, and personality without watching every full recording.

The point isn’t that video is cheat-proof. Candidates can still prepare. They should. But preparation for a video response requires actual understanding of the topic.

You can’t paste a position description into ChatGPT and get a convincing 2-minute video response. The medium itself raises the bar.

Situational questions test thinking, not recall. Instead of asking "What’s your experience with X?" (which AI can answer flawlessly), ask "Walk us through how you’d handle this specific scenario." Give context.

Make it specific to your company.

"A customer calls saying they were charged twice and they’re upset. Our refund policy requires manager approval for anything over $50. What do you do?"

AI can generate a generic answer. But a candidate with real experience will reference specific tools, describe realistic tradeoffs, and show judgment that generic responses can’t match.

Timed components add a constraint that’s hard to work around with AI. When a candidate has only a short, fixed amount of thinking time before recording, there’s little room to alt-tab to ChatGPT, generate an answer, memorize it, and deliver it naturally. The time constraint acts as a natural authenticity filter.

How to rebuild trust in your pipeline

Shifting from text-based screening to signal-rich screening isn’t just about catching AI use. It’s about building a pipeline where your first-pass decisions are based on real evidence.

Here’s a practical framework.

Step 1: Reduce weight on text inputs. Make cover letters optional for most positions. Resumes still matter for career history, but stop treating bullet-point phrasing as a signal of capability. Use resumes for facts (where they worked, how long, what role) and look for substance elsewhere.

Step 2: Add a video or audio screening step early. This doesn’t need to be complex. Three to five questions, recorded on the candidate’s own schedule. The goal is to hear how they think about real problems.

This single step eliminates most of the noise created by AI-generated applications. It requires candidates to actually show up.

Step 3: Ask questions AI struggles with. "What’s the last problem you solved that didn’t have an obvious answer?" "Tell us about a time your approach didn’t work and what you learned." "What’s something specific about this position that interests you, and why?"

These questions reward specificity and lived experience. Generic AI-generated answers are obvious when delivered on video.

Step 4: Evaluate consistently. The risk with manual review is that you end up with different reviewers using different standards. Apply the same criteria to every candidate. Score against the specific requirements you defined for the position, not gut reactions to presentation style.

Step 5: Focus your time on the top of your shortlist. The real cost of AI-generated applications isn’t that bad candidates get through. It’s that good candidates get buried. When every application looks polished, you can’t prioritize.

Video screening and structured scoring give you a ranked shortlist. You spend your phone screens and live interviews on candidates who’ve already demonstrated alignment.
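Steps 4 and 5 can be sketched as a small weighted rubric: every reviewer rates the same criteria on the same scale, and the shortlist is sorted by the weighted total. The criteria, weights, and candidate names below are hypothetical, and this is not a description of how Truffle or any other platform computes its match scores.

```python
# Hypothetical criteria and weights for a customer success role; weights sum to 1.0.
CRITERIA_WEIGHTS = {
    "problem_solving": 0.4,
    "communication": 0.35,
    "role_specific_detail": 0.25,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Combine 1-5 ratings into one number using the shared weights."""
    missing = set(CRITERIA_WEIGHTS) - set(scores)
    if missing:
        # Refuse to rank a candidate who was not rated on every criterion.
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(CRITERIA_WEIGHTS[name] * scores[name] for name in CRITERIA_WEIGHTS)


def shortlist(reviews: dict[str, dict[str, int]], top_n: int) -> list[tuple[str, float]]:
    """Return the top_n candidates as (name, score) pairs, highest score first."""
    ranked = sorted(reviews, key=lambda name: weighted_score(reviews[name]), reverse=True)
    return [(name, round(weighted_score(reviews[name]), 2)) for name in ranked[:top_n]]


# Made-up ratings from reviewing three video responses.
reviews = {
    "Candidate A": {"problem_solving": 4, "communication": 3, "role_specific_detail": 5},
    "Candidate B": {"problem_solving": 2, "communication": 5, "role_specific_detail": 2},
    "Candidate C": {"problem_solving": 5, "communication": 4, "role_specific_detail": 4},
}

for name, score in shortlist(reviews, top_n=2):
    print(name, score)
```

Raising an error on a missing rating is a deliberate choice here: it keeps a half-reviewed candidate from being ranked against fully reviewed ones.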

Candidates using ChatGPT to apply isn’t the real problem

The panic about candidates using ChatGPT to apply is understandable. It feels like the rules changed overnight. But the deeper truth is that text-based application signals were always fragile.

Cover letters were never a great predictor of anything except writing ability (or the ability to hire a good resume writer). Keyword matching was always gameable. Resume bullet points were always a mix of truth, exaggeration, and phrasing choices that said more about someone’s self-marketing skills than their actual capabilities.

AI didn’t break your application process. It exposed the cracks that were already there. Candidates have been optimizing their applications for filters and scanners for years. ChatGPT just made it faster and more accessible.

The teams that respond best to this shift won’t be the ones who find the cleverest detection tool. They’ll be the ones who redesign screening around signals that are harder to fake and easier to evaluate.

Video over text. Demonstrated thinking over polished phrasing. Structured criteria over gut reactions.

The era of trusting a cover letter at face value is over. What comes next is better: screening that shows you who candidates actually are, not just how well they can write about themselves.