How to screen candidates without losing your mind (or your best matches)

You have 150 candidates for one open position. Your hiring manager wants a shortlist by Friday. If you phone-screened every one of them, you'd need 30+ hours. You don't have 30 hours. You probably don't have 10.

AI summary

- Phone screens are the bottleneck in most hiring processes. They take the most time and scale the worst.

- One-way video interviews with AI analysis let you screen 100 candidates in the time it takes to phone-screen 5.

- Structured screening with consistent criteria is fairer and faster than ad-hoc resume reviews and phone calls.

A software team we watched hire a dozen engineers last spring needed 1,200 applications to get there. That’s a 1% yield, and the other 99% weren’t a pile of near-misses.

Most of it was what the team’s lead called “trash, largely from mass-apply AI.” They weren’t exaggerating.

A single open-source agent released a few months ago pulls 700 custom-tailored resumes in one search, and thousands of candidates are now running tools like it against every job posted on a major job board. The math of screening has changed, and the playbooks from 2024 don’t survive contact with it.

If you’ve been recruiting for more than a year, you already know the feeling. The inbox doesn’t fill up anymore. It floods. Easy-apply shoves 200 resumes into your ATS in an afternoon and maybe 20 of them have any business being in your shortlist. The 20 that deserve a real look get buried under the 180 that don’t, and the people doing the reviewing are tired by the end of the week.

The instinct, through most of last year, was to fight volume with more AI. Feed the flood into a model. Let the model rank everything. Trust the output. That instinct is now falling apart, and the playbook for 2026 looks different.

This guide to screening candidates is about what actually works, grounded in what we’re seeing across the teams we talk to, and why the 2026 version of screening is less about adding intelligence and more about redesigning the process around a single question: who deserves 30 seconds of your attention, and who deserves 30 minutes?

The candidate screening thesis that quietly broke

Through most of 2024 and 2025, the dominant pitch was “AI candidate screening.” You upload the requisition. The AI reads the resumes. The AI picks the best ones. You interview the winners. Done.

That story is collapsing in three places at once.

The first is the one everyone can feel

The resumes aren’t real. Candidates are generating them with the same class of models recruiters are using to evaluate them, and the asymmetry has reversed.

It used to take a candidate 45 minutes to tailor a resume to a job. Now it takes eight seconds. Which means the thing you’re reading was never written to you.

It was written at the model you’re going to use to read it. Everyone’s evaluating synthetic text written for other synthetic text. Nobody has met anyone yet.

The second is regulatory

This one’s been sneaking up on the industry, and the teams that aren’t watching it are about to get surprised. The UK’s Information Commissioner’s Office released guidance earlier this year that quietly killed the “human in the loop” defense most vendors have been selling since 2023.

The old pitch was: it’s fine to use an AI sifter, because a recruiter clicks the final “reject” button. The new guidance says that if the recruiter doesn’t perform a meaningful review of each flagged application, the process is still classified as a solely automated decision, with all the compliance overhead that comes with it.

The rubber-stamp workflow a lot of vendors built their product around isn’t legally safe anymore, and similar guidance is landing in EU and US jurisdictions. New York City’s Local Law 144 has required bias audits for automated employment decision tools since 2023, and it’s the floor, not the ceiling.

The third is the consent gap, which almost nobody is talking about

Every time a recruiter brags about “feeding 1,000 resumes into ChatGPT,” they’re describing a compliance problem.

Candidates submitted that personal data under the privacy policy of one ATS. They did not consent to being silently processed by a third-party LLM owned by a different company. GDPR, the EU AI Act, and CCPA all treat that as a problem, and the enforcement posture is tightening fast. The teams that got ahead of this in 2025 are now finding that their vendor choices are a procurement question as much as a product question.

Put those three together and you get a simple conclusion. The “AI does the screening” story doesn’t work anymore, and the teams still selling it haven’t updated their pitch.

The more honest version of the story sounds like this. Models are excellent at finding the signal in a pile of data. They’re bad at judgment calls. The teams that are winning in 2026 have stopped asking AI to make decisions and started asking it to make decisions possible.

Which leads to the frame I want you to take from this post.

In 2026, screening is an information problem, not a decision problem.

Your job is to get enough information, fast enough, to make a confident human call. The tools that help you do that are worth paying for. The applicant screening tools that try to make the call for you are the ones your hiring manager will override, your lawyer will flag, and your strongest candidates will walk away from.

What applicant volume actually looks like now

Before getting into what to do, sit with what the funnel looks like in 2026.

Most playbooks from two years ago assumed a couple hundred applicants for a mid-level role. That number is gone. The teams we work with are reporting:

- 1,200 applications to fill a dozen engineering seats. Roughly 1% conversion from applicant to hire.

- 200 applications in the first 24 hours after a role goes live on a major job board.

- Somewhere between 80% and 95% of inbound applications missing at least one of the listed requirements.

- A quietly growing category of “jobs” that aren’t jobs at all. They’re resume-harvesting funnels posted by vendors and career-services companies to seed a database or train a model. Candidates spend real effort applying to roles that were never open. When they find out, they never apply anywhere again without skepticism.

The result is a signal-to-noise inversion. The problem with your pipeline isn’t that it’s empty. It’s that the valuable candidates in it are indistinguishable from the rest until you’ve spent serious time looking, and by the time you’ve looked, they’ve accepted somewhere else.

If you sit down and do the math on what “review every applicant” costs in 2026, it stops making sense almost immediately. A 30-second glance at a resume across 1,200 applicants is 10 hours of focused work. That’s before a single phone screen, a single live interview, a single hiring manager meeting. It’s also before you lose three candidates to a faster competitor, which brings me to the next piece.
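That arithmetic is easy to rerun with your own numbers. Here is a minimal sketch in Python; the function name is made up for illustration, and the 30-second glance is the figure from the paragraph above, not a measured benchmark.

```python
def review_hours(applicants: int, seconds_per_resume: float) -> float:
    """Hours of focused work needed to glance at every resume once."""
    return applicants * seconds_per_resume / 3600


# The figures used above: 1,200 applicants at 30 seconds each.
print(review_hours(1200, 30))  # 10.0
```

Swap in your own applicant count and an honest per-resume time, and the case for not reviewing everything by hand usually makes itself.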

Candidate screening speed is now a compensation issue

The strongest candidates you’ll screen this year are in the market for less than ten days. Many are in the market for less than three. If your process takes a week, you are, functionally, selecting from the B-tier by default, because the A-tier accepted somewhere else on Tuesday.

This isn’t a preference anymore. For a growing number of in-house recruiters, time-to-fill is tied directly to annual bonuses. Every day you spend on screening mechanics is a day that shows up in your comp review. It’s also a day your competitor is making offers. Speed in 2026 is not a nice-to-have. It’s a line item in your household budget.

So when you design your screening process for 2026, the question isn’t “how do I find the best candidate.” It’s “how do I find a good enough candidate before they accept another offer.”

Those are different problems. One of them has a perfect answer that arrives too late to matter. The other has a pretty-good answer that arrives by Thursday, which is the one you want.

The 2026 candidate screening stack, in four moves

Here’s what the better screening process looks like now. I’m going to keep this concrete and opinionated. You can disagree with any of these and still build a process that works, but I’d argue you have to disagree for reasons rather than by default.

1. Replace the resume skim with structured signal capture

The unit of screening used to be the resume. In 2026, the resume is the least reliable artifact in the pile, because models can write one in eight seconds and most candidates are doing exactly that. The signal you actually want is some combination of three things: can this person do the work, do they understand the role, and are they going to be worth working with day to day.

None of those show up cleanly on a resume, and none of them ever really did. What’s changed is that the resume used to at least filter out people who weren’t paying attention, and now it doesn’t even do that. So replace it. Move the first filter to something a candidate can’t fake in eight seconds:

- A short structured application with two or three role-specific questions.

- A brief async video interview where the candidate introduces themselves and explains why they’re a fit.

- A small scenario-based exercise that takes them 10 minutes.

The goal of this first step is not to evaluate. The goal is to make candidates do something so you have a richer artifact to evaluate later. The people who won’t spend 10 minutes applying are self-selecting out, and that’s the filter working.

2. Rank on criteria, not vibes, and write the criteria down

Once you have a richer artifact, you can rank it. This is where AI finally earns its keep in 2026, but only in the narrow way I described earlier: as a pattern-finder, not a decider.

A good ranking step in 2026 looks like this. You define the criteria before you see the first resume. Three to six specific things that matter for the role. Not “strong communicator.” Specific:

- “Can explain a technical concept to a non-technical audience.”

- “Has hands-on experience with async processes.”

- “Has managed a team of four or more.”

Then you score each candidate’s submitted artifact against those criteria, and you use the scores as a first-pass ranker.

The important word in that paragraph is ranker. You’re not letting the scores make cuts. You’re using them to decide who to review first. The scores are a triage tool. The cut still happens in your head.
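If it helps to see the "ranker, not cutter" idea in concrete form, here is a rough sketch in Python. Everything in it is an assumption for illustration: the criteria wording, the 0 to 3 scale, and the `Candidate` and `review_order` names. The one property that matters is that the function reorders the pile and never drops anyone from it.

```python
from dataclasses import dataclass, field

# Hypothetical criteria, written down before any candidate is reviewed.
CRITERIA = [
    "explains a technical concept to a non-technical audience",
    "hands-on experience with async processes",
    "has managed a team of four or more",
]


@dataclass
class Candidate:
    name: str
    # One score per criterion, from 0 (no evidence) to 3 (strong evidence).
    scores: dict = field(default_factory=dict)

    def total(self) -> int:
        # A criterion with no recorded score counts as 0.
        return sum(self.scores.get(c, 0) for c in CRITERIA)


def review_order(candidates: list) -> list:
    """Sort by total score, highest first. Nobody is removed from the list."""
    return sorted(candidates, key=lambda c: c.total(), reverse=True)


pool = [
    Candidate("A", {CRITERIA[0]: 1, CRITERIA[1]: 2}),
    Candidate("B", {CRITERIA[0]: 3, CRITERIA[1]: 3, CRITERIA[2]: 2}),
    Candidate("C", {}),
]

for candidate in review_order(pool):
    print(candidate.name, candidate.total())
# B 8
# A 3
# C 0
```

Candidate C is still in the output. A low score changes when a human looks at them, not whether one does.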

This is also where the compliance layer quietly pays for itself. Under the new regulatory posture, if the AI is doing the cut and the recruiter is just rubber-stamping, you’re in trouble.

If the AI is ranking and the recruiter is documenting a real review against written criteria, you’re fine. The difference between those two workflows isn’t the tool. It’s how you’re using it. Pick a tool that forces you into the second workflow and resist the temptation to skip steps when the volume gets heavy.

The bonus is that written criteria also make you faster at the conversation nobody wants to have. When a hiring manager rejects fifteen finalists in a row, you now have something to point at. “We scored every candidate against the criteria you signed off on in March. These fifteen people scored above the threshold. Either the criteria are wrong or the bar is unrealistic.”

That’s a different conversation than the one you’d have with just your gut and a shared sense that things aren’t working.

3. Give 30 seconds before you give 30 minutes

The single biggest upgrade for time-starved recruiters in 2026 is the short async artifact. A short video of the candidate answering the first question. A voice note. A quick recorded walkthrough. Whatever the format, the point is the same: get something human and specific in front of you before you commit to a live call.

Phone screens aren’t going away. They’re just moving later in the funnel. Instead of using a phone screen as your first real interaction with a candidate, use it as a confirmation of what you already saw in their async. This flips the ratio. Instead of doing 30 phone screens to find three people worth a second conversation, you watch 30 shorts and do three phone screens. That’s a ten-to-one reduction in live-call volume for the same shortlist, which is the difference between a burned-out recruiter and one who still likes their job on Friday afternoon.

One note on this, because it matters. Async video done badly is worse than no video at all. The teams doing this well are not making candidates sit through a 20-minute proctored interrogation with a webcam pointed at them.

They’re asking two or three specific questions and letting candidates answer in 30 to 90 seconds each. The goal is a richer artifact and not an Oscar-winning performance. Candidates who find the format dehumanizing will drop out, and the ones who drop out for that reason are often the ones you’d most want to talk to. Design the format to feel like a low-stakes introduction and not an interrogation, and watch your completion rates track your quality.

The other note: AI interviewing that doesn’t handle accents, accessibility, and communication style differences scales bias instead of reducing it. The vendors who’ve solved this are the ones whose scoring is grounded in what the candidate said, not how they said it.

If your screening tool penalizes someone for a non-native accent or a communication style the model wasn’t trained on, you are making your pipeline worse and taking on legal risk at the same time. Ask your vendor for their bias audit, and if they don’t have one, treat that as the answer to your question.

4. Give the hiring manager evidence, not calendar invites

The last move is the one most recruiters skip, and it has the biggest payoff. The hiring manager is the other bottleneck in your screening process. They ghost you. They change the criteria mid-search. They reject unicorns because they’re intimidated. Every recruiter reading this is nodding.

The fix isn’t better meetings. It’s better evidence, handed over asynchronously. If you can hand a hiring manager three short candidate profiles and say “these are the top three, watch them before our sync tomorrow,” you get better feedback than if you hand them three resumes and book a 45-minute review call. Hiring managers hate scheduling time to review candidates. Take the scheduling out of the loop and the process moves twice as fast.

This is also where the “1 inbox, 1 tool, 1 price” consolidation pitch you’re seeing everywhere right now actually has teeth. The average recruiter we talk to is running between six and ten separate tools across a single hire: ATS, sourcer, scheduler, call app, assessment tool, video tool, notes tool, analytics. Every additional tool is a place where context gets lost and a hiring manager gets an extra tab they won’t open. Consolidation isn’t about saving money on software. It’s about making sure the hiring manager actually sees the candidate before the candidate accepts somewhere else.

What this looks like in practice

Put it together and the 2026 screening process for a typical role runs something like this:

- A candidate applies. They answer two role-specific questions and record a short video.

- Your system scores their submission against your pre-defined criteria and ranks the pile.

- You watch the top 20 or 30 shorts in sequence, take notes on the five you want to actually talk to, and schedule phone screens with those five.

- Your hiring manager watches the same five shorts before their weekly sync with you.

- You both arrive at the meeting with opinions, not questions.

- The offer goes out by Friday.

Every step has a human making the call, with written criteria and documented evidence they can defend to a skeptical hiring manager, a lawyer, a regulator, or themselves six months from now when they’re wondering whether the process is still working.

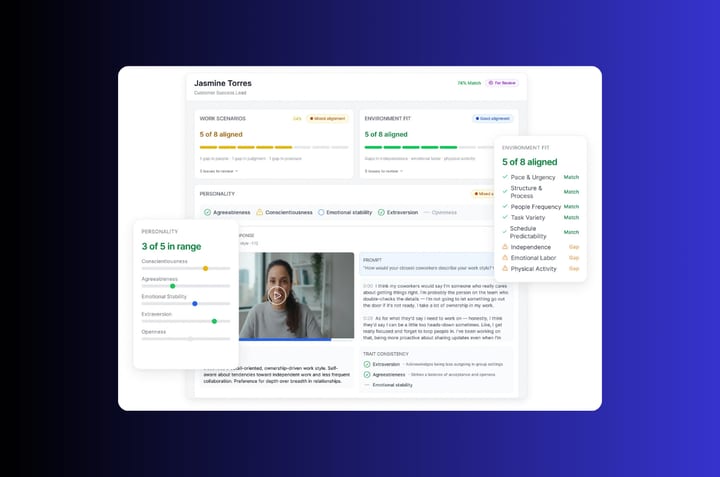

It’s the approach behind Truffle, a candidate screening platform that combines resume screening, one-way video interviews, and talent assessments, but the framework matters more than any specific tool. You can build something like this with a spreadsheet and a Loom account if you want. The tool just makes it faster, more consistent, and easier to defend. What matters is the principle: AI surfaces information, and humans make the call.

The bigger shift underneath all of this

Zoom out for a second. What’s actually happening in 2026 isn’t that screening got harder. It’s that the shape of screening changed.

- Volume stopped being a bottleneck you could muscle through and started being a permanent condition of the job.

- Resumes stopped being reliable artifacts and started being synthetic white noise.

- Speed stopped being a preference and started being tied to compensation and candidate retention.

- Compliance stopped being a back-office concern and started being a legal requirement written into local law, on two continents, with more coming.

All of those shifts point the same direction. The recruiter’s job is less about reading and more about deciding. Less about scheduling and more about judging. Less about throughput and more about the quality of the small number of conversations you actually end up having.

The research the industry’s been pushing this year is clear on which skill matters most going forward. Critical thinking is the top capability talent acquisition leaders say they need. AI literacy is a long way down the list. That isn’t a contradiction. It’s a signal. The leaders who actually watch how hiring works understand that the technical fluency matters less than knowing when to apply it and when to override it. The value isn’t in running the model. It’s in knowing which of its outputs to trust and which to ignore.

The teams that will look back on 2026 as the year their hiring actually got better aren’t the ones that bought the most AI. They’re the ones that figured out how to put the AI on the right side of the decision, and how to give their recruiters back the part of the job that made them want to do it in the first place.

That part was never data entry. It was locking arms with a good hiring manager, finding someone unexpected, and watching a real person build a real career. The screening process is just the thing that gets you there, and the one you design this year will determine how many of those moments you actually get to have.

Frequently asked questions about candidate screening

What’s the difference between pre-screening and the rest of the screening process?

Pre-screening is the filter you apply before a human spends real time on a candidate. It’s the work you do at the top of the funnel, before anyone gets a phone interview or a live screening interview. A good pre-screening step in 2026 includes a structured application form, a role-specific question or two, and maybe a 60-second async video. The goal isn’t to pick the qualified candidates. It’s to remove the ones who clearly aren’t, so your structured screening process has something to work with.

Everything after that is your real hiring process. Phone screening, skills assessments, final interview, reference check. The clearer the line between pre-screen and screen, the faster your time to hire.

Should you still read cover letters?

Honestly, mostly no. The cover letter used to be a signal of effort. In 2026, it’s the single easiest artifact to generate with an LLM, which means it’s the single lowest-signal artifact in the pile. If you’re still asking for one, you’re reading synthetic writing tailored to your job description by a model, and you’re using your own time to do it.

A better move: replace the cover letter field with two or three role-specific questions that ask for concrete examples from the candidate’s work history. “Tell us about a time you dealt with X” beats “Write a 400-word cover letter explaining why you’re a fit for this role.” You’ll get better signal in less space, and the answers are harder to fake cleanly.

How do you screen for cultural fit without turning it into vibes-based hiring?

Cultural fit is the most abused phrase in recruiting. Used well, it means “this person’s working style will fit the team they’re joining.” Used badly, it means “this person reminds me of me.” The difference is whether you’ve defined it before you see any candidates.

The fix is to write down your company values and the specific work behaviors that express them, then score candidates against those behaviors the same way you’d score required skills. “Gives direct feedback in writing” is a behavior you can assess. “Culture fit” is a vibe that will get your hiring decisions challenged by legal on a Tuesday. Same goes for work ethic. If you can’t describe what it looks like in action, don’t use it as a screening criterion.

When should you run background checks and reference checks?

Late, and only once. Background checks and reference checks are expensive, slow, and legally sensitive, which is why you run them at the end of the process, not the middle. The sequence most teams we talk to use: async pre-screen, ranking, phone interview, hiring manager interview, verbal offer pending checks, then the actual pre-employment screening run (criminal history where allowed, employment and education verification, professional references).

Running reference checks on every shortlisted candidate burns your political capital with their references and wastes hours you don’t have. Run them on the finalist, and run them hard. Ask the hard questions. Verify the dates on the resume against the dates they give you. That’s where work history checks actually catch things.

Is social media screening worth the legal risk?

Usually not. Social media screening sounds cheap and fast, but it exposes you to discrimination claims based on protected class information you can’t “unsee” once you’ve looked at a profile. Age, religion, pregnancy status, disability status. Once you’ve viewed it, the candidate’s lawyer can argue it influenced your decision, whether it did or not.

If you do it, do it through a third party that filters out protected-class information and only surfaces job-relevant findings. Document what you looked at and why. Never do it yourself on a Tuesday afternoon because you were curious. The “free” version is the one that ends up in court.

What’s the right way to use an ATS and AI-powered screening tools in 2026?

Use them as triage, not judgment. A modern applicant tracking system can rank resumes against a job description, flag employment gaps, run Boolean searches against your database, and route candidates through pre-defined stages. All of that is useful. What you should not do is let the ATS auto-reject based on a score. That’s the workflow regulators are now classifying as an automated decision, and that’s the workflow that’s going to cost you.

The right use of an AI-powered screening assistant is to surface the top 10% so you can look at them first, surface the bottom 10% so you can confirm the rejection, and leave the middle 80% for a real human review. The tool runs triage. The recruiter runs judgment. If a vendor’s demo makes it look like the tool is doing both, that’s your signal to ask harder questions about how it handles bias audits.
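As a sketch of that 10/80/10 split, assuming you already have a best-first ranked list from whatever tool you use: the `triage` function and the bucket names below are hypothetical, and the shares are the ones suggested above. Nothing is rejected in code. Each bucket only says what kind of human review comes next.

```python
def triage(ranked: list, top_share: float = 0.10, bottom_share: float = 0.10) -> dict:
    """Split a best-first ranked list into three human review queues.

    Assumes top_share + bottom_share is at most 1.
    """
    n = len(ranked)
    top_n = round(n * top_share)
    bottom_n = round(n * bottom_share)
    return {
        "review_first": ranked[:top_n],             # look at these first
        "full_review": ranked[top_n:n - bottom_n],  # real human review
        "confirm_rejection": ranked[n - bottom_n:], # a human confirms each no
    }


# Placeholder IDs standing in for 200 ranked applicants.
ranked = [f"candidate-{i}" for i in range(1, 201)]
buckets = triage(ranked)

for name, members in buckets.items():
    print(name, len(members))
# review_first 20
# full_review 160
# confirm_rejection 20
```

The bottom bucket is labeled `confirm_rejection` on purpose: the tool proposes, and the recruiter still opens each one and records the reason.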

What should you do about employment gaps and nonlinear career progression?

Stop treating them as a red flag. The last six years have produced more legitimate employment gaps than the previous twenty combined, for reasons that have nothing to do with candidate quality. Layoffs, caregiving, long COVID, sabbaticals, failed startups, career changes. A clean linear resume isn’t a signal of a better hire. It’s a signal of someone whose luck held.

The right screening move is to ask about the gap directly in the async or the phone interview, in a neutral tone, and listen to the answer. If the answer is coherent and the educational background and work history check out on reference, the gap itself is not the story. What the candidate did during the gap often is.

Where do skills assessments, personality tests, and take-home assignments actually fit?

Each one solves a different problem, and using the wrong one is worse than using none.

Skills assessments and aptitude tests are for roles where you need to verify a specific capability the resume can’t prove. Writing, coding, data analysis, language fluency. Use them when the cost of a bad hire is high and the skill is testable in under an hour. Avoid them for soft skills, which they measure badly.

Take-home assignments and paid trial projects are for senior roles where you want to see real work. They’re expensive in recruiter hours and candidate hours, so reserve them for finalists. Pay for them. A paid employment trial that takes five hours and costs $250 is a cheap way to avoid a bad hire that costs $50,000 in ramp time.

Personality assessments are the most abused category. They’re useful for building context about how someone prefers to work, not for making a go/no-go decision. Never use them as a cut. Never use them without a validated instrument. If your vendor can’t name the study that validated their test, don’t use the test.

How should you handle salary expectations during screening?

Early, in writing, and without games. The fastest way to waste three weeks of screening is to get to the offer stage and discover you’re $30,000 apart. Ask for a salary expectation range in the application form, publish your own range in the job description, and move the candidates whose expectations don’t fit out of the funnel before anyone spends a phone interview on them.

This also dramatically improves candidate experience. The people whose time the current screening process wastes most are the ones who go through six rounds to find out the pay is half what they need. Telling them upfront is not scaring them off. It’s respecting their time, which is also yours.

How do you evaluate communication skills from a 30-second async video?

You don’t evaluate them from the video alone. You use the video as one signal in a richer picture. What you can tell from 30 seconds: whether the candidate can express a clear thought under mild pressure, whether they understood the question, and whether their written answer matches the way they speak. That last one is the highest-signal test in the async format, because it catches candidates whose resume was written by a model with a much larger vocabulary than they have.

What you cannot reliably tell from 30 seconds: body language, depth of experience, or anything about how they’d behave on day 47 of a hard project. Don’t score for those. Score the video as “do I want to spend 15 more minutes with this person” and leave the rest for the phone interview.

What does a bad hire actually cost, and how do you avoid one?

The finance department’s number is usually somewhere between 30% and 200% of the role’s annual salary, depending on how senior the role is and how long the person stays. The real number is higher than that, because it doesn’t count the hit to the team’s morale, the work that didn’t get done while you were recruiting the replacement, or the candidates you passed on during the first search who are no longer available.

The way to avoid a bad hire is not to screen harder. It’s to screen for the right things and make well-informed decisions on each one. Written criteria. Structured evidence. Async artifacts the whole hiring team can review. A documented reason for every yes and every no. When hiring efficiency improves, it’s almost never because the process got faster. It’s because the process stopped second-guessing itself, and that only happens when the evidence is clear enough to trust.