Structured vs. unstructured interviews: what the research says and what most teams still get wrong

Structured interviews outperform unstructured ones on nearly every measurable axis — predictive validity, fairness, legal defensibility. Most teams know this and still run unstructured. Here's why, and what a structured loop actually looks like.

Every recruiting team I’ve worked with knows structured interviews outperform unstructured ones. Most still run unstructured interviews anyway. The gap between what teams know about interview methodology and what they actually do is one of the largest evidence-practice gaps in any operational discipline, and it costs the industry a measurable amount in mis-hires every year.

This post is the version of the structured-vs-unstructured comparison that goes past the textbook summary. It covers what the research actually says, what structure does and doesn’t mean, why teams keep defaulting to unstructured even when they know better, and what a 2026 structured loop looks like when the tools handle the parts that used to make structure expensive.

What the research actually shows

The reference paper most people end up citing is Schmidt and Hunter’s 1998 meta-analysis in Psychological Bulletin, updated by Schmidt, Oh, and Shaffer in 2016. They compiled validity coefficients for 19 hiring methods, comparing each method’s correlation with subsequent job performance.

The headline numbers usually quoted from that work:

| Method | Validity coefficient |
|---|---|
| GMA (cognitive ability) tests + structured interview | 0.65 |
| Structured employment interview | 0.51 |
| Work sample tests | 0.48 |
| Unstructured employment interview | 0.38 |
| Integrity tests | 0.31 |
| Reference checks | 0.26 |
| Years of education | 0.10 |
| Years of work experience | 0.06 |

A validity coefficient is the correlation between a hiring signal and later performance. Higher is better; the theoretical max is 1.0, and in practice anything over 0.30 is meaningful. Structured interviews at 0.51 are doing real work. Unstructured at 0.38 are doing modest work. The gap between them is 0.13 points, roughly 30% in relative terms, and it compounds across the hires you make in a quarter.
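How big the gap looks depends on how you express it. As a quick worked calculation, using only the two interview coefficients quoted above and the standard reading of a squared correlation as the share of variance explained:

```latex
\begin{align*}
\text{absolute gap} &= 0.51 - 0.38 = 0.13 \\[4pt]
\text{gap relative to unstructured} &= \frac{0.13}{0.38} \approx 0.34 \\[4pt]
\text{gap relative to structured} &= \frac{0.13}{0.51} \approx 0.25 \\[4pt]
r^2_{\text{structured}} &= 0.51^2 \approx 0.26 \\[4pt]
r^2_{\text{unstructured}} &= 0.38^2 \approx 0.14
\end{align*}
```

The "roughly 30%" figure sits between the two relative readings. In variance terms, the structured interview accounts for close to twice as much of the variation in later performance.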

A separate body of research, going back to Robert Guion in the 1960s and through McDaniel, Whetzel, Schmidt & Maurer (1994), has consistently found the same pattern: structure correlates with predictive validity, and the more structured an interview is (same questions, same scoring, same order, evaluator independence), the more closely the result correlates with later job performance.

The pattern doesn’t depend on whether the interviewer is a recruiter or a hiring manager, whether the role is technical or non-technical, or whether the company is a startup or a Fortune 500. The effect shows up across industries, levels, and decades.

What “structure” actually means

This is where most teams get confused. “Structured” doesn’t mean formal, robotic, or scripted. It means a specific set of design decisions about the interview itself.

A structured interview has four properties:

- Same questions. Every candidate for the same role gets the same core question set. Follow-ups can vary; the core questions don’t.

- Same order. The questions are asked in the same sequence across candidates. Order effects are real — asking about a strength after a weakness produces different answers than asking about a weakness after a strength.

- Same rubric. Each question has a defined evaluation criterion and a scoring scale (typically a 1-5 behaviorally anchored scale). The interviewer scores against the rubric, not against an impression of the candidate.

- Evaluator independence. Each interviewer scores their portion of the interview independently before any group debrief. Scores get submitted to a shared scorecard before the team talks about the candidate.
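The four properties can be sketched as a small data structure. This is a minimal illustration rather than any particular tool's implementation: the `Question` and `Scorecard` names, the 1-5 range check, and the interviewer names are assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Question:
    """One core question plus its behaviorally anchored rubric (hypothetical shape)."""
    text: str
    criterion: str
    anchors: dict[int, str]  # score -> what an answer at that level looks like


@dataclass
class Scorecard:
    """Holds each interviewer's scores and hides them until everyone has submitted."""
    questions: list[Question]   # fixed order, identical for every candidate
    interviewers: set[str]      # panelists expected to score
    _scores: dict[str, list[int]] = field(default_factory=dict, init=False, repr=False)

    def submit(self, interviewer: str, scores: list[int]) -> None:
        if interviewer not in self.interviewers:
            raise ValueError(f"unknown interviewer: {interviewer}")
        if interviewer in self._scores:
            raise ValueError("scores are final once submitted")
        if len(scores) != len(self.questions):
            raise ValueError("one score per core question is required")
        if any(not 1 <= s <= 5 for s in scores):
            raise ValueError("scores must be on the 1-5 rubric scale")
        self._scores[interviewer] = list(scores)

    def reveal(self) -> dict[str, list[int]]:
        """Evaluator independence: nothing is visible until all scores are in."""
        missing = self.interviewers - set(self._scores)
        if missing:
            raise PermissionError(f"waiting on: {sorted(missing)}")
        return dict(self._scores)


# Usage with made-up data: the debrief cannot see scores until both have submitted.
question = Question(
    text="Tell me about a time you handled a difficult stakeholder.",
    criterion="stakeholder management",
    anchors={1: "no concrete example", 3: "example, unclear outcome", 5: "example with clear outcome"},
)
card = Scorecard(questions=[question], interviewers={"ana", "ben"})
card.submit("ana", [4])
card.submit("ben", [2])
all_scores = card.reveal()
```

Tone, warmth, and follow-ups are deliberately absent from the model. It constrains only the question list, the scale, and when scores become visible.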

Notice what’s not on the list. Tone, warmth, conversational flow, follow-up questions, the rapport at the start of the interview — none of those are constrained. A structured interview can be warm. It can be conversational. It can follow the candidate’s interesting answers with deeper probes. The constraint is on the inputs (same questions and rubric) and the evaluation (independent scoring against the rubric), not on the experience of the conversation.

Most teams that think they’re running structured interviews are running standardized openings followed by improvised everything else. “Tell me about a time you handled a difficult stakeholder” gets asked at the start of every interview, and then the next 40 minutes are improvised on whatever the candidate brings up. That’s an unstructured interview with a structured opening line. The validity numbers from the research don’t apply to it.

What unstructured interviews actually optimize for

Unstructured interviews aren’t useless — they’re just not measuring what most teams think they’re measuring.

What an unstructured interview does measure well: how comfortable the candidate is in a freeform conversation, how quickly they think on their feet, how charming or polished they come across, how their personality reads to the specific interviewer in the room. Those are real signals. They correlate with some aspects of job performance — particularly for roles where verbal fluency and social comfort matter.

What an unstructured interview does not measure well: anything specific about the candidate’s ability to do the actual job. The conversation drifts toward whatever the interviewer is curious about, the interviewer evaluates against an internal impression rather than a rubric, and the impression conflates “did I enjoy talking to this person” with “could this person do the role.” Those two questions have different answers about 40% of the time, and the unstructured interview can’t tell them apart.

The result is hires who interview well and underperform at the job, plus hires the team rejected because they didn’t interview well but would have outperformed. Both kinds of error show up at scale. Both are invisible to teams who don’t measure quality-of-hire against interview scores.
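Measuring quality-of-hire against interview scores can start as a plain correlation between the score a hire received and a later performance rating. A minimal sketch, assuming you have both numbers for each hire; the sample values below are invented for illustration only.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sample is constant")
    return cov / sqrt(var_x * var_y)


# Invented example data: interview score at hire, performance rating a year later.
interview_scores = [3.2, 4.1, 4.5, 3.8, 2.9, 4.0]
performance_ratings = [3.0, 3.9, 4.2, 3.1, 3.3, 4.4]
observed_validity = pearson(interview_scores, performance_ratings)
```

This number covers only the people you hired, so it will tend to understate the true relationship. The rejected candidates who would have done well never appear in the data.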

Why teams keep running unstructured interviews

Three reasons, in roughly the order they bite:

It’s faster to set up. Structured interviews require building question sets, rubrics, and scorecards before you can run them. That’s 4-6 hours of upfront work per role. Most hiring managers don’t want to do it. Most recruiters don’t have time to push them.

Interviewers feel more confident in unstructured conversations. Every interviewer is convinced they’re an unusually good reader of people, an unusually good question-improviser, and an unusually accurate evaluator of candidates. The Dunning-Kruger pattern shows up clearly in interview research: less-experienced interviewers tend to overestimate their own validity, and the certainty of their reads doesn’t correlate with their actual hit rate. Structured interviews feel rigid because they remove the interviewer’s freedom to “go with their gut.” The gut is the thing the research says shouldn’t be trusted.

Structure feels rigid to the candidate. This is the smallest of the three reasons but the most commonly cited. The fear is that asking the same questions in the same order will feel mechanical and the candidate will be put off. In practice candidates can’t tell the difference between an interview with a structured question set and an interview without one, as long as the interviewer runs the conversation skillfully. The interviewer is what feels human or robotic. The question set has nothing to do with it.

What changed in 2026

The structured-vs-unstructured comparison was an evidence-practice gap for thirty years partly because building a structured interview was expensive. You needed a job analysis, a question set, a behaviorally anchored rubric, calibrated interviewers, and a tool to capture independent scores before the debrief. Most small and mid-market teams didn’t have any of that infrastructure.

Three things changed.

Screening interviews are async by default. A one-way interview asks every candidate the same questions in the same order, recorded individually. The “same questions, same order” requirement of structured methodology is automatic — there’s no live interviewer to drift off-script. The screening layer of the funnel is now structured whether the team designed it that way or not.

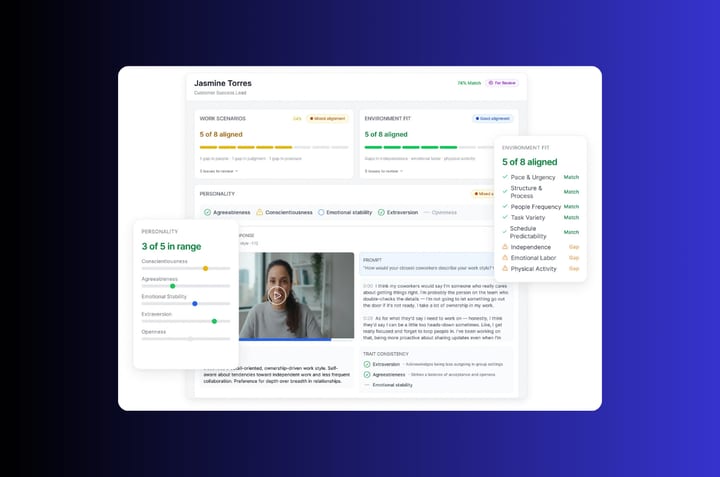

Scorecards run on AI-assisted evaluation. Once the screening is recorded, AI Match scores each candidate’s response against the criteria the recruiter set during intake. The independent-evaluator-score-before-debrief requirement happens at the screening stage automatically, and the live round inherits a pre-scored shortlist instead of starting from zero.

Question sets and rubrics are reusable. A question set built once for a Customer Success Lead role works for every CSL hire after the first one. Rubrics calibrated against the team’s actual best performers get sharper with every cycle. The 4-6 hours of upfront work amortizes across many hires instead of repeating for each one.

The cost side of “structured interviews are expensive to run” has mostly collapsed. The evidence-practice gap is now mostly cultural — interviewers who still believe their unstructured intuition is more accurate than a rubric.

A working structured loop, end to end

Here’s what a structured interview process looks like when the tooling carries the structure:

- Intake. Hiring manager and recruiter define 3-5 must-have criteria and the questions that test each one. 30-45 minutes of work, captured in a structured brief.

- Screening interview. Every candidate records a one-way interview answering the same 4-6 questions in the same order. AI Match scores each response against the criteria. The recruiter reviews ranked results and produces a shortlist of 5-8.

- Hiring manager shortlist review. The hiring manager watches Candidate Shorts (30-second condensed clips of each shortlisted candidate). They pick 3 for live conversation, based on the screening evidence and their own scorecard reactions to the recorded answers.

- Live structured interview. Each candidate gets the same 5-7 live questions in the same order from a small panel (one or two interviewers). Each interviewer scores against the rubric independently before they talk to each other.

- Structured debrief. Scorecards go on the shared sheet. The team discusses the deltas (where panelists scored the same candidate differently and why) instead of trading impressions. The decision runs from the scorecards.

- Reference checks and offer. The references answer a structured set of questions tied back to the criteria. The offer rationale points to specific evidence, not to a general impression.
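The "discuss the deltas" part of the debrief can be made concrete by flagging the questions where panelists disagree most. A rough sketch, assuming each panelist's scores are a list in question order; the `score_deltas` name and the threshold of 2 points are arbitrary choices for illustration.

```python
def score_deltas(scores: dict[str, list[int]], threshold: int = 2) -> list[tuple[int, int]]:
    """Return (question_index, spread) pairs where panelists differ by at least `threshold`.

    `scores` maps each panelist to their rubric scores, in question order.
    Results are sorted so the largest disagreement comes first.
    """
    flagged = []
    for index, question_scores in enumerate(zip(*scores.values())):
        spread = max(question_scores) - min(question_scores)
        if spread >= threshold:
            flagged.append((index, spread))
    return sorted(flagged, key=lambda item: -item[1])


# Made-up panel: agreement on question 0, disagreement on questions 1 and 2.
panel = {"ana": [4, 2, 5], "ben": [4, 5, 3]}
agenda = score_deltas(panel)
```

The flagged list works as the debrief agenda: time goes to the questions where the rubric was read differently, not to restating scores everyone already agrees on.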

Every step is structured. None of it feels mechanical to the candidate because the warmth of the conversation comes from the interviewers, not from the question set. The candidate experiences a competent process. The team experiences a defensible decision. The validity numbers from the research show up because the methodology actually got run.

The objection that’s worth taking seriously

The strongest version of the case for unstructured interviews goes like this: structure can’t capture the subtle signals that experienced interviewers actually use. Pacing, hesitation, the way a candidate handles an unexpected question, what they choose to volunteer when given space. Those signals are real, they’re predictive, and they’re hard to encode in a rubric.

This is the version of the objection worth engaging. The answer isn’t to throw out structure. It’s that structure constrains the interviewer’s evaluation, not their observation. A structured interview can absolutely include “open follow-up time” where the interviewer probes whatever they find interesting. The constraint is on the scoring: those probes don’t go into the rubric, because the rubric is calibrated against the standardized questions. The improvised follow-ups can show up in the debrief as qualitative color, but they don’t drive the score.

In practice this means a structured interview has space for the experienced interviewer’s instincts. It just doesn’t let those instincts overwrite the rubric-based score. The hire decision uses the rubric score as the primary input and the qualitative color as a secondary input that can break ties but can’t override the structured assessment. That’s the version of structured interviewing that captures the validity research and the experienced-interviewer intuition at the same time.
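One way to encode "qualitative color can break ties but can't override" is a lexicographic sort: the rubric score is compared first, and the qualitative signal only matters when rubric scores are equal. This is a sketch under assumptions: the `Assessment` fields and the -1/0/+1 encoding of debrief notes are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assessment:
    candidate: str
    rubric_score: float     # e.g. mean of the independent rubric scores
    qualitative_edge: int   # -1, 0, or +1, summarizing debrief notes (assumed encoding)


def rank(assessments: list[Assessment]) -> list[Assessment]:
    """Order candidates by rubric score; qualitative notes only break exact ties."""
    return sorted(
        assessments,
        key=lambda a: (a.rubric_score, a.qualitative_edge),
        reverse=True,
    )


# Made-up shortlist: C and A tie on the rubric, so the debrief notes decide between them.
# B has the best qualitative read but a lower rubric score, so B still ranks last.
shortlist = [
    Assessment("A", 4.2, -1),
    Assessment("B", 4.0, 1),
    Assessment("C", 4.2, 1),
]
ordered = [a.candidate for a in rank(shortlist)]
```

Exact ties are rare with averaged scores, so a team might round rubric scores to one decimal before ranking. That rounding step is a policy choice and is left out of the sketch.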

If you’re running unstructured today

Two upgrades are worth doing, in order:

First, move the screening layer to async. Same five questions for every candidate, recorded, scored against the criteria. The screening stage is the easiest place to add structure and the place where structure has the highest leverage (it filters out 60-80% of your candidates).

Second, build a scorecard for the live round. Same five questions, same rubric, scored independently before the debrief. This is the harder part because it changes interviewer behavior. But the screening structure makes the live round easier to standardize — the live questions can probe deeper on the criteria the async screening already filtered for.

The 30% validity gap from the Schmidt and Hunter data shows up in the hires you make over the next four quarters, not in the next interview. The pattern is small per-hire and large in aggregate. Teams that close it report better quality-of-hire trends, lower 90-day attrition, and more defensible decisions when a hire doesn’t work out. The methodology is decades old. The 2026 version of it just got cheap enough to run by default.

Frequently asked questions about structured vs. unstructured interviews

What is a structured interview?

A structured interview uses the same set of pre-defined questions, asked in the same order, evaluated against the same rubric across every candidate for a role. Each interviewer scores independently against the rubric before the debrief, not based on impressions formed during the conversation. The structure constrains the questions and the evaluation, not the warmth or conversational style of the interaction.

What is an unstructured interview?

An unstructured interview is freeform. The interviewer improvises questions based on the candidate’s responses and forms an overall impression of fit rather than scoring against pre-defined criteria. Most casual or “culture fit” interviews are unstructured, and most teams that think they’re running structured processes are actually running unstructured ones with a standardized opening question.

Are structured interviews really more effective than unstructured ones?

Yes, by a meaningful margin. Schmidt and Hunter’s meta-analyses (1998, updated 2016) give structured interviews a predictive validity coefficient of about 0.51 for job performance, versus 0.38 for unstructured. That’s roughly a 30% gap in the ability of the interview to predict whether a candidate will perform well in the role. The effect has held up across decades of research, multiple industries, different validity criteria, and varied performance metrics.

Why do most companies still use unstructured interviews?

Three main reasons. Structured interviews require upfront work (4-6 hours per role to build question sets and rubrics and to calibrate interviewers), which most teams don’t budget for. Interviewers tend to overestimate their own ability to read candidates in a freeform conversation, so structured methodology feels like it’s constraining their best skill when it’s actually constraining their biggest source of error. And teams worry that structure will feel rigid to candidates, even though candidates can’t tell the difference between a structured and an unstructured interview when the interviewer is competent at running the conversation.

How do I run a structured interview without making it feel mechanical?

Build the question set and rubric once, then run the conversation naturally inside it. Same core questions in the same order for every candidate, but the interviewer can vary warmth, follow-ups, and conversational tone. The candidate doesn’t experience the structure — they experience a competent interviewer who has done their homework. The structure is a constraint on the interviewer’s evaluation, not on the candidate’s experience of the conversation.