20 problem-solving interview questions and what good answers actually sound like

Most problem-solving questions test whether the candidate can sound smart under pressure. The ones that matter test how they think when the answer isn't already in their head.

Problem-solving is the most over-prepped category in interviewing. Candidates have STAR-format answers ready for every “tell me about a time you solved a problem” question. They’ve watched the LinkedIn videos. They know the rhythm. Most interviewers nod at the structure of the answer and miss the thinking inside it. The candidate sounds competent. The hire doesn’t always work out.

The fix is to ask questions the candidate hasn’t rehearsed. Real problems from real roles, with constraints they can’t optimize for in advance. Then score the reasoning process visible in the answer — not the conclusion they reach.

This post covers 20 questions in that style, what good answers sound like, and a scoring rubric that focuses on process. Use the ones that match the role.

What you’re actually trying to evaluate

Problem-solving as an interview signal breaks into five sub-skills:

- Clarifying the problem. Does the candidate restate it in their own terms, ask what’s known and unknown, and confirm what success looks like?

- Breaking it down. Do they decompose the problem into smaller, tractable pieces, or do they try to solve the whole thing at once?

- Surfacing constraints. Do they ask about time, budget, data, dependencies, and politics, or do they assume away the hard part?

- Generating options. Do they consider 2-3 paths and pick between them, or do they jump to the first idea?

- Choosing. Do they make a call with reasoning, or do they hedge into “it depends” without committing?

The questions below probe these five sub-skills from different angles. Mix and match to the role.

Category 1: Open-ended scenarios with limited information

These work best as a live exercise where the candidate can ask questions back. Plan to spend 8-12 minutes per question.

1. We launched a feature 6 weeks ago and engagement looks flat. Walk me through how you’d figure out what’s going on.

What good sounds like: Asks about the engagement metric definition before diving in. Asks how the feature was launched (gradual rollout vs. all-at-once). Asks if “flat” means flat versus baseline or flat versus expectation. Names 3-4 hypotheses (discovery, value, friction, segment mix) and how to test each cheaply.

What weak sounds like: Jumps to a solution (“I’d A/B test it”). Doesn’t clarify what flat means. Assumes the metric is the right metric.

2. A customer is threatening to cancel a $200K contract because of a problem we caused. You have 30 minutes before the call. How do you prepare?

What good sounds like: Asks who the customer-side contact is and what relationship history exists. Asks about the team-side facts. Names what they’d want to know before the call (root cause, what’s been fixed, what hasn’t, what we’re offering). Considers what not to commit to in the call.

What weak sounds like: Goes straight to “I’d apologize and offer a discount.” No prep work. No information-gathering phase.

3. Our team’s hitting our quarterly goals on paper but morale’s low. Where do you start?

What good sounds like: Distinguishes “hitting goals” from “hitting the right goals.” Asks what the goal-setting process was. Asks about workload distribution, recognition patterns, and turnover risk. Considers whether the goals are the cause or a coincidence.

What weak sounds like: “I’d run a survey.” Doesn’t engage with the diagnostic question first.

4. A long-running project is two months behind schedule with no clear culprit. What’s your first move?

What good sounds like: Differentiates “behind on time” from “behind on outcomes.” Asks about the project’s original assumptions and which ones broke. Considers whether to cut scope, extend, or kill. Asks who has the authority to make each of those calls.

What weak sounds like: Goes straight to “find the bottleneck.” Doesn’t consider whether the schedule was real to begin with.

Category 2: Behavioral (past-tense) problem-solving

These are the highest-validity problem-solving questions. They surface what the candidate actually did, not what they think they would do.

5. Tell me about a problem you solved that didn’t have a documented playbook.

What good sounds like: Picks a real problem, not a small one. Walks through the approach: what they knew, what they had to figure out, where they got stuck, how they got unstuck. Acknowledges what they’d do differently.

What weak sounds like: Picks a problem with a playbook. Skips the “got stuck” part. Tells a clean linear narrative that doesn’t match how real problems get solved.

6. Walk me through a decision you made with incomplete information that turned out to be wrong.

What good sounds like: Real decision, real consequence. Owns the call without externalizing blame to the information gap. Names what they learned. Points to a later decision where they applied the learning.

What weak sounds like: Small-stakes decision. Blames the information gap. No second-order reflection.

7. What’s the hardest debug you’ve ever done? (Pick the format — code bug, customer relationship, process breakdown, whatever.)

What good sounds like: Picks a real hard one. Describes the dead-end paths, not just the path that worked. Shows how they updated their mental model as evidence came in.

What weak sounds like: Picks something easy and dresses it up. No dead-end paths described.

8. Tell me about a time your initial diagnosis of a problem was wrong. How did you figure out you were wrong, and what did you do?

What good sounds like: Specific moment of realization. Doesn’t make the wrong diagnosis sound dumb in retrospect — shows why it was reasonable at the time. Names what evidence flipped them.

What weak sounds like: No specific moment. Wrong diagnosis sounds dumb (which usually means the story has been rewritten).

Category 3: Prioritization under constraint

These test the “surfacing constraints” and “choosing” sub-skills. Often best as a quick scenario with a forced trade-off.

9. You have 5 days of engineering time and 4 high-priority items. Each one takes 2-3 days. How do you choose?

What good sounds like: Asks who set the priorities and against what. Asks about reversibility — which items can wait, which can’t. Asks about dependencies. Asks if anything can be cut in scope rather than skipped entirely.

What weak sounds like: “I’d talk to the team.” Doesn’t engage with the trade-off.

10. A peer is asking you to take on work that’s not yours. Saying yes hurts your project; saying no hurts theirs. Walk me through your call.

What good sounds like: Names what they’d want to know before deciding (criticality on their side, time cost on yours, alternatives they’ve already tried). Considers whether to escalate, whether to negotiate scope, whether to time-box. Doesn’t default to either “always yes” or “always no.”

What weak sounds like: Defaults to “I’d say yes if I could fit it.” No scoping conversation.

11. You inherit a team with three open priorities and no clarity on which matters most. What do you do in week one?

What good sounds like: Doesn’t pick a priority in week one. Spends week one talking to stakeholders and understanding context, then names a priority in week two with the reasoning shown. Acknowledges they’re going to disappoint somebody.

What weak sounds like: Picks a priority on day three. Doesn’t acknowledge the political cost.

12. We can hire one of three roles this quarter — sales, engineering, or customer success. Argue for one.

What good sounds like: Asks what’s true about the business (growth rate, attrition, pipeline health) before arguing. Once they have context, makes a clean case for one. Acknowledges what they’re giving up.

What weak sounds like: Argues for one without asking. Doesn’t acknowledge what’s being given up.

Category 4: Recovery and adjustment

These test how the candidate handles getting it wrong in the room. Real on-the-job problem-solving involves a lot of being wrong; if the interview format doesn’t surface that, it’s missing the trait.

13. (Mid-question redirect:) Hmm, that’s not quite what I was asking. Let me reframe. [Reframe the question.] How would your answer change?

What good sounds like: Pauses, restates the reframed question, asks a clarifying question if needed, then gives a genuinely different answer that engages with the reframe.

What weak sounds like: Defends the original answer. Or repeats the original answer with new packaging.

14. (After a strong answer:) That was a good answer. What’s the weakness in your own reasoning there?

What good sounds like: Names a real weakness — an assumption they made, a constraint they ignored, a scenario where their approach breaks.

What weak sounds like: “I don’t think there is one.” Or names a generic weakness (“I could have communicated it better”).

15. (After they propose a solution:) Imagine that solution fails. What’s the most likely reason it failed?

What good sounds like: Picks a specific failure mode tied to the proposal. Names what they’d watch for in the early signal.

What weak sounds like: Generic failure mode (“the team didn’t buy in”). No specific watch-fors.

16. What’s something you got wrong in this interview so far, and what would you change about your answer?

What good sounds like: Picks something real, names what they’d change. Bonus for catching a specific weakness in an earlier answer.

What weak sounds like: “Nothing comes to mind.” Or names something irrelevant.

Category 5: Calibration and self-awareness

These wrap the interview. They test whether the candidate can talk about their own problem-solving honestly.

17. What kind of problems are you best at? What kind do you struggle with?

What good sounds like: Specific types of problems on each side. Self-aware about the trade-off.

What weak sounds like: “I’m a generalist.” Or names a fake weakness (“I work too hard”).

18. When have you brought in someone else to solve a problem you couldn’t solve alone? How did you decide to ask?

What good sounds like: Specific moment, specific person, specific decision rule (time-spent threshold, “this is outside my expertise,” stuck pattern).

What weak sounds like: “I always ask for help.” No decision rule.

19. What’s the most useful thing someone has taught you about how to think?

What good sounds like: Real lesson, real teacher (or source), real application. Bonus for showing how their thinking changed.

What weak sounds like: Cliché (“always question your assumptions”). No application.

20. If we hired you and 3 months in you were underperforming, what would be the most likely reason?

What good sounds like: Honest. Names a real risk that’s specific to them (not “I don’t know the codebase yet” — closer to “I tend to optimize for technical depth over speed of decision, which might not fit if the bar is rapid iteration”).

What weak sounds like: “I always perform.” Or names a risk that’s external (ramping, onboarding).

The scoring rubric

Score the process visible in the answer, not the conclusion. A candidate who reaches the wrong answer with clean reasoning is a stronger hire than one who reaches the right answer via a memorized template.

For each question, score the candidate 1-5 on each of these sub-skills:

| Sub-skill | Strong (4-5) | Weak (1-2) |
|---|---|---|
| Clarification | Restates the problem, asks what’s known/unknown, confirms success criteria | Jumps to a solution; doesn’t engage with the framing |
| Decomposition | Breaks into 2-3 tractable parts | Tries to solve as one monolith |
| Constraint awareness | Surfaces time, budget, data, dependency, political constraints | Assumes away the hard part |
| Option generation | Considers 2-3 paths, names the trade-offs | First idea is the only idea |
| Decision | Makes a call with reasoning; acknowledges what they’re giving up | Hedges into “it depends” without committing |

Average the five scores per question; average across questions for an overall problem-solving score. A 3+ average is the threshold to advance; a 4+ average is the threshold for senior roles.
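If you track scores in a spreadsheet or a script, the arithmetic looks roughly like the sketch below. It is only an illustration of the averaging described above: the function names, the sub-skill keys, and the sample scores are made up for the example, and it assumes every question is scored on all five sub-skills.

```python
# Minimal sketch of the rubric arithmetic. All names and sample scores
# here are hypothetical; only the averaging and thresholds come from the rubric.

SUB_SKILLS = (
    "clarification",
    "decomposition",
    "constraint_awareness",
    "option_generation",
    "decision",
)

ADVANCE_THRESHOLD = 3.0  # "3+ average" to advance
SENIOR_THRESHOLD = 4.0   # "4+ average" for senior roles


def question_score(scores: dict) -> float:
    """Average the five sub-skill scores (each 1-5) for one question."""
    for skill in SUB_SKILLS:
        if skill not in scores:
            raise ValueError(f"missing score for {skill}")
        if not 1 <= scores[skill] <= 5:
            raise ValueError(f"{skill} must be between 1 and 5")
    return sum(scores[skill] for skill in SUB_SKILLS) / len(SUB_SKILLS)


def overall_score(per_question: list) -> float:
    """Average the per-question averages into one problem-solving score."""
    if not per_question:
        raise ValueError("need at least one scored question")
    return sum(question_score(q) for q in per_question) / len(per_question)


def recommendation(overall: float, senior_role: bool = False) -> str:
    """Compare the overall score against the relevant threshold."""
    threshold = SENIOR_THRESHOLD if senior_role else ADVANCE_THRESHOLD
    return "advance" if overall >= threshold else "do not advance"


# Example: two questions scored by one interviewer (invented numbers).
scored = [
    {"clarification": 4, "decomposition": 4, "constraint_awareness": 3,
     "option_generation": 4, "decision": 5},   # averages to 4.0
    {"clarification": 3, "decomposition": 3, "constraint_awareness": 4,
     "option_generation": 2, "decision": 3},   # averages to 3.0
]
overall = overall_score(scored)                        # 3.5
print(overall, recommendation(overall))                # 3.5 advance
print(recommendation(overall, senior_role=True))       # do not advance
```

In this example the same candidate clears the general bar but not the senior one, which is the situation where it pays to reread the per-question notes rather than rely on the number alone.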

Where async screening fits

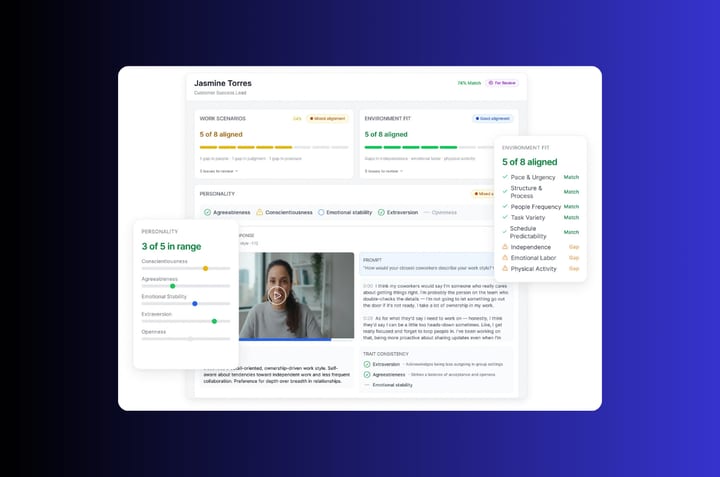

The behavioral problem-solving questions (5-8) work very well in async one-way interviews. Each candidate answers the same questions in the same order; AI Match scores the responses against the criteria above. The recruiter inherits a ranked shortlist with the reasoning process visible in Candidate Shorts.

The scenario and reframe questions (1-4, 13-15) work better live because the back-and-forth is part of the signal. Keep these in the panel or hiring manager interview after the async screen.

The split lets you evaluate problem-solving at two stages: the async screen filters out candidates whose past problem-solving doesn’t hold up, and the live round tests how their reasoning runs in real time. Both stages score against the same rubric, so the data accumulates instead of fragmenting.

Frequently asked questions about problem-solving interview questions

What are good problem-solving interview questions?

Good problem-solving questions test how the candidate thinks when the answer isn’t already in their head, rather than whether they can recite a memorized framework. The strongest formats are unfamiliar scenarios with limited information, prioritization under constraints, and reflection on a real decision that didn’t go well. The point is to see the reasoning process visible in the answer, not to get to a “correct” answer the interviewer had in mind.

How do you assess problem-solving skills in an interview?

Score the process visible in the answer, not the conclusion. Use a 1-5 rubric on five things: how the candidate clarifies the problem, breaks it down into tractable pieces, surfaces constraints, generates options, and chooses between them with reasoning. A candidate who reaches a wrong answer with clean reasoning is a stronger hire than one who reaches the right answer via a memorized template.

What are behavioral problem-solving questions?

Behavioral problem-solving questions ask about real past situations where the candidate had to solve a problem with incomplete information — versus hypothetical “how would you handle X” questions. Behavioral questions surface what the candidate actually did. Hypothetical questions surface what they think they would do. Both are useful, but behavioral questions correlate more strongly with future on-the-job performance, especially when paired with follow-ups that probe what they learned.

How do you ask about problem-solving without using a brainteaser?

Skip brainteasers entirely. They mostly test pattern-matching to puzzles the candidate may have seen before, plus comfort performing under pressure, and neither correlates with on-the-job problem-solving. Replace them with a concrete problem from a role you’ve actually worked: “we hit this issue last quarter, here’s what we knew, walk me through how you’d approach it.” Real problems beat clever ones, and they’re harder to over-prep for because the constraints are specific to your context.

What’s a red flag in problem-solving interview answers?

Three patterns. First: jumping straight to a solution without restating or clarifying the problem. Second: confident answers with no acknowledgment of trade-offs or unknowns. Third: blaming circumstance, the team, or available information when describing a past decision that didn’t go well. All three signal that the candidate is performing certainty rather than thinking, and the underlying problem-solving rarely matches the confidence on display.