20 panel interview questions that actually triangulate (and how to score them)

Most panel interview questions are a single interviewer's question asked in a louder room. The questions that work in a panel format do something only a panel can — generate signal across multiple criteria at once.

Most panel interview question lists you’ll find online are recycled solo-interview questions arranged into a longer list. “Tell me about a time you handled a difficult stakeholder,” then five panelists nod, and somebody types it into a Google Doc. That’s not a panel interview. That’s a slightly more expensive solo interview with witnesses.

The version of a panel interview worth running uses the one thing a panel format can do that a solo interview can’t: evaluate multiple distinct criteria in parallel from the right people. The engineer scores technical depth. The PM scores stakeholder communication. The security lead scores judgment under ambiguity. Three panelists, three scores, three criteria. The triangulation is real because the panelists aren’t evaluating the same thing.

This post is the 20 questions that produce that kind of triangulation — organized by criterion, mapped to the panelist who should ask them, and scored with a 1-5 rubric each panelist submits privately before the debrief.

The structure: who asks what

A 45-60 minute panel for a professional role usually fits 5-8 core questions plus follow-ups. Distribute them across the panel by criterion. Three panelists, two questions each, plus shared opening and closing rapport. The candidate gets 6 minutes per question; you get six criterion-tied data points.

The questions below are grouped by the four most common panel criteria. Use the ones that match your role.

Category 1: Technical depth and execution

Asked by the panelist most qualified to evaluate technical work. For an engineering hire that’s another engineer; for a marketing hire it’s a senior marketing leader; for a sales hire it’s a sales leader or sales engineer.

1. Walk me through a recent project you led where the technical decisions were yours to make. What were the trade-offs you weighed?

What good sounds like: Names a specific project. Lists 2-3 trade-offs and which side they chose for each. References constraints (budget, timeline, team skill). Mentions one decision they’d make differently in hindsight.

What weak sounds like: Generic descriptions (“we built a scalable system”). No specific trade-offs. Credits “the team” without showing their own decision-making.

2. What’s a technical concept you’ve learned in the last 6 months, and what made you go learn it?

What good sounds like: Specific concept, specific source (paper, course, person they learned from), specific applied use case. Shows current learning posture.

What weak sounds like: Vague (“I’ve been getting into AI”). No applied use. Can’t explain the concept past surface level.

3. Describe a problem you solved that didn’t have a documented playbook.

What good sounds like: Frames the problem clearly. Walks through their approach. Acknowledges where they got stuck and how they got unstuck. Shows what they learned.

What weak sounds like: Picks a problem that did have a playbook. Skips the “got stuck” part. Presents a clean linear narrative that doesn’t match how real problems get solved.

4. What’s the most complex system or process you’ve worked with? How did you build a mental model of it?

What good sounds like: Picks a real system. Describes the parts that were hardest to understand and the strategies they used (reading code, drawing diagrams, talking to someone who built it). Shows comfort with not knowing.

What weak sounds like: Picks a system that’s complex in name but simple in their day-to-day. Describes “reading the docs” as their mental-model strategy.

Category 2: Stakeholder communication

Asked by the panelist who’ll work with this person across team boundaries — typically a PM, a partner team lead, or a hiring manager from a different function.

5. Tell me about a time you had to explain a complex decision to a non-technical stakeholder. What changed about how you explained it compared to how you’d explain it to a peer?

What good sounds like: Names the analogy or framing they used. Shows awareness of what was hard to translate. Mentions a moment they realized the explanation wasn’t landing and adjusted.

What weak sounds like: “I just simplified the language.” No awareness of where the gap actually was.

6. Walk me through a time a stakeholder pushed back on your recommendation. What did you do?

What good sounds like: Acknowledges the pushback was valid in some respect. Shows whether they changed their position, held it, or found a third path — and why. Doesn’t reframe the stakeholder as wrong.

What weak sounds like: Hero narrative (“they came around to my view”). No nuance on what the pushback revealed.

7. How do you decide when to bring a problem up the chain versus solve it within your team?

What good sounds like: A clear heuristic. Examples of both directions. Acknowledges times they got the call wrong and what they learned.

What weak sounds like: “I try to solve it first” or “I always escalate.” No working principle.

Category 3: Judgment under ambiguity

Asked by the panelist evaluating decision-making — often the hiring manager or a senior leader from the candidate’s reporting line.

8. Tell me about a decision you made with incomplete information that turned out to be wrong. What did you do next?

What good sounds like: Picks a real decision (not a small one). Owns the call without externalizing blame. Names what they learned and how their decision process changed. Bonus if they can point to a later decision where they applied the learning.

What weak sounds like: Picks a small decision. Blames the information gap. No second-order reflection.

9. What’s a project or decision where you’d reverse yourself if you got to do it again?

What good sounds like: Real reversal, specific reason. Shows current thinking has updated. Bonus for “I still hold this part” — partial reversals are more honest than full ones.

What weak sounds like: “I’d just communicate more.” No actual decision reversal.

10. When you don’t have enough data to decide cleanly, how do you decide anyway?

What good sounds like: A working approach (reversibility test, smallest viable experiment, time-box, ask 2 specific people). Mentions when the approach broke down.

What weak sounds like: “I gather more data.” Doesn’t engage with the premise that the data isn’t going to be there.

11. What’s a hill you’d die on at your current/most recent job, and why?

What good sounds like: Picks something concrete and operational, not vague values. Can articulate why they’d hold the line. Acknowledges the cost of holding it.

What weak sounds like: Either picks something nobody disagrees with (“treating people well”) or picks something so contrarian it raises a flag.

Category 4: Cross-functional dynamics

Asked by a peer of the future hire — someone on the team they’ll work most closely with day-to-day.

12. Tell me about a time you disagreed with a peer on how to approach something. How did it resolve?

What good sounds like: Concrete disagreement, real resolution. Both parties’ arguments described fairly. Doesn’t make the other person the villain.

What weak sounds like: The other person comes off badly. No resolution mechanism named.

13. What’s a time you took on work outside your role to unblock someone else?

What good sounds like: Specific instance, specific person, specific work. Shows they noticed the unblock was needed. Doesn’t oversell as heroism.

What weak sounds like: Generic team-player line. No specific moment.

14. Describe how you’d onboard onto our team in your first 30 days. What would you prioritize?

What good sounds like: Asks clarifying questions about the team’s current state. Names 2-3 priorities (1:1s with peers, learning the codebase/process/customer, picking up a small ownership area). Acknowledges they don’t yet know what they don’t know.

What weak sounds like: Generic onboarding plan that could apply anywhere. No clarifying questions.

15. What’s something we said about the role or the company that you want to push back on or dig deeper into?

What good sounds like: Real pushback or question. Shows they were listening critically through the loop, not just being agreeable.

What weak sounds like: “No, it all sounds great.” Missed signal — either they’re not engaging deeply, or they’re not honest enough to raise concerns in a power-asymmetric setting.

Closing questions (shared, any panelist)

These don’t get scored against a single criterion — they’re calibration and culture-fit reads.

16. What’s a piece of feedback you’ve gotten in the last year that changed how you work?

17. What’s something you’re better at now than you were a year ago, and what made the difference?

18. What kind of manager helps you do your best work? What kind doesn’t?

19. What questions do you have for us that we haven’t already covered?

20. Is there anything we didn’t ask that you wish we had?

The scoring rubric

This is the half most teams skip and it’s where the actual triangulation happens.

For each question, each panelist scores their candidate 1-5 on the criterion they own:

| Score | Anchor |

|---|---|

| 5 | Strong evidence of the trait at a higher level than the role requires |

| 4 | Strong evidence at the level the role requires |

| 3 | Some evidence but not consistent or deep |

| 2 | Weak evidence; mostly generic answers or hedging |

| 1 | Negative signal; the answer revealed a real gap |

Critical: each panelist submits their scores to a shared scorecard before any group discussion. Submitting scores after the debrief defeats the entire purpose of using a panel.

The debrief reads from the scorecard. The team discusses deltas — where panelists scored the same answer differently — instead of trading impressions. If two panelists scored the technical-depth question as 4 and one scored it 2, the conversation is “what did the third panelist see that the others didn’t?” — not “I felt good about this candidate.”

This is what makes a panel actually triangulate. The questions distribute the evaluation. The scoring discipline keeps the evaluations independent. The debrief uses the data instead of replacing it.

How async screening changes the panel question set

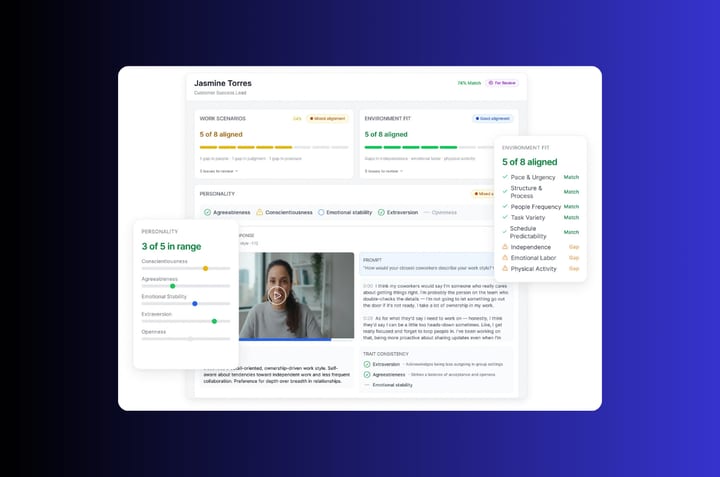

If you’re running a Truffle async screening interview before the live panel, three things shift in the question set above.

First, the technical-depth questions (1-4) can run in async. Each candidate records answers to the same questions, AI Match scores them on technical signal, and the live panel inherits a pre-scored shortlist instead of running these cold. The live panel then probes deeper on the criteria the screening already filtered for. Truffle Candidate Shorts compress each candidate’s screening answers into 30-second reviewable clips before the live round.

Second, the stakeholder-communication questions (5-7) often work better live because the candidate’s adjustments to the room are part of the signal. Keep these in the panel.

Third, the judgment and cross-functional questions (8-15) split. Some work async (the reflective ones); some work live (the ones where back-and-forth matters). Pick by whether the question’s value is in the answer or in the conversation around the answer.

The panel session shrinks from 60 minutes covering everything to 30-45 minutes covering what only a panel can cover. The candidate experience improves. The signal density goes up. The four-criteria triangulation still happens — just across two stages instead of one.

Frequently asked questions about panel interview questions

What are good questions to ask in a panel interview?

Good panel questions assign different criteria to different panelists. The engineer asks about technical depth, the PM asks about stakeholder communication, the security lead asks about judgment under ambiguity. The point isn’t to ask many questions — it’s to ask questions whose answers can be scored independently by panelists looking at different signals. A panel asking the same question through different mouths is wasting four-fifths of the format.

How many questions should a panel interview have?

5-8 core questions for a 45-60 minute panel. Each panelist owns 2-3 questions on their criterion. Going past 8 cuts into the candidate’s response time and produces shallow answers; going below 5 leaves criteria un-evaluated and pushes the decision back onto qualitative impressions. Closing rapport-and-culture questions sit outside the 5-8 count and don’t usually get scored against a criterion.

How do you score panel interview answers?

Use a 1-5 behavioral-anchored rubric per question, scored privately by each panelist before any group discussion. Scores get submitted to a shared scorecard. The debrief discusses deltas — where panelists scored the same answer differently and why — instead of trading impressions. The decision is driven by the aggregated scorecard, not by the loudest panelist’s read.

Should panelists ask the same questions or different questions?

Different questions, each tied to the panelist’s criterion. If three panelists ask variations of the same question, the candidate gets evaluated three times on one trait and zero times on the others — that’s not triangulation, that’s redundancy. Assign criteria before the interview starts and have each panelist ask the questions that test theirs. The same logic applies to follow-up questions: stay in your criterion lane.

What’s the difference between panel interview questions and structured interview questions?

Structured interview questions are about the discipline of asking every candidate the same questions in the same order. Panel interview questions are about distributing those questions across multiple interviewers, each evaluating a different criterion. A good panel is structured by definition — same questions per panelist per candidate — and adds the cross-functional evaluation layer on top. The two aren’t alternatives; the best panels are structured panels.